Introduction To Azure Kubernetes Service

In this article, we'll learn briefly about Azure Kubernetes Service and go through a step-by-step process to deploy a Kubernetes cluster in Azure, scale the cluster, upgrade it, and finally delete it once it is no longer needed.

Let us imagine a scenario where developers are preparing for a demo. Issues arise when other machines cannot run the software the way the original machine did. "It was working on my machine" is the most common line we hear. To address this problem, a proper system was needed. In a nutshell, an application built on one system might not function properly when it is used in a new environment. The key solutions that address this problem are Virtual Machines and Containers. Learn about Virtual Machines from the previous article, Azure Compute.

Containers

A container can be defined as an executable unit of software in which the application code is packaged with the dependencies and libraries it needs, so that it can run wherever required, from the cloud to on-premises services. You can learn more about Containers, and briefly about container orchestration, from the previous article, Containers And Container-Orchestration In Azure.

Container Challenges

Source: CNCF 2020 Survey Report

We can see that the majority agree that complexity is the biggest challenge in deploying containers. This is not an easy subject by any means and can be overwhelming. But it also means we can make it easier as we understand it a bit better.

Differences between Virtual Machines and Containers

| Virtual Machines (VM) | Containers |
| --- | --- |
| A virtual machine is the emulation or virtualization of an entire computer system. A VM behaves like a physical computer system altogether. | A container is an executable unit of software in which the application code is packaged with the dependencies and libraries it needs, so that it can run wherever required, from the cloud to on-premises services. |
| The entire computer system is virtualized. | Only the operating system is virtualized. |
| A VM is large, because it carries a full guest operating system. | A container is lightweight; an image can be as small as a few megabytes. |
| E.g., Azure Virtual Machines, VMware, etc. | E.g., Docker |

Benefits of Using Containers

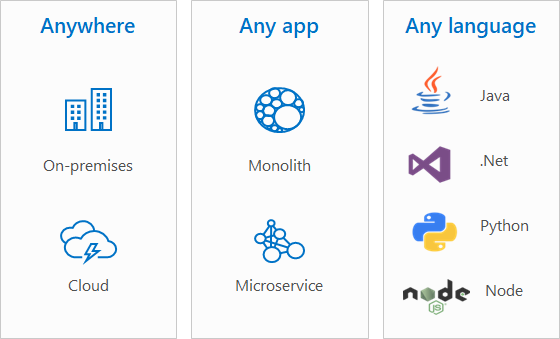

Containers offer major benefits. They support different operating systems, run both on-premises and in cloud services, and can be used by applications with either a monolithic or a microservices architecture. Moreover, multiple languages such as Java, .NET, Python, and Node are all supported. Basically: any OS, anywhere, for any type of application architecture, programmed in any language.

Docker

Docker is a platform that helps to develop, ship, and run applications by delivering software in packages known as containers.
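As a rough illustration of the develop, ship, and run cycle, the sketch below builds an image and starts a container from it. It assumes a Dockerfile already exists in the current directory and that the application inside listens on port 80; the image name `hello-app:1.0` and the host port 8080 are hypothetical.

```shell
# Hypothetical image name; replace with your own.
IMAGE="hello-app:1.0"

build_and_run() {
  # Build an image from the Dockerfile in the current directory.
  docker build -t "$IMAGE" . || return 1
  # Start a container from the image, mapping host port 8080 to
  # container port 80 (assumed), and remove the container on exit.
  docker run --rm -p 8080:80 "$IMAGE"
}
```

Calling `build_and_run` from a project directory runs both steps in order and stops if the build fails.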

Kubernetes

Kubernetes automates the deployment, scaling, and management of containerized applications. This open-source container-orchestration software was developed and released by Google in 2014 and is currently maintained by the Cloud Native Computing Foundation (CNCF). With the rise of microservices, there has also been a surge in the use of containers and, with it, of container orchestration with Kubernetes.

Kubernetes is an orchestration tool that enables high availability, meaning little or no downtime. It also provides scalability and high performance, and it supports disaster recovery, i.e., backup and restore. More about Disaster Recovery and Business Continuity here.

The Cattle vs Pets analogy is important for understanding how Kubernetes should be used. The convention is that workloads and infrastructure managed by Kubernetes should be treated like cattle and not like pets. The analogy comes from the idea that pets are individually important and hard to replace, whereas cattle can be interchanged. Moving the production infrastructure to the cattle approach therefore helps create high availability, reduced failure, and a fast disaster recovery system with minimal downtime. Kubernetes has become the gold standard for container orchestration primarily because it allows DevOps engineers to treat containers like cattle.

Basic Architecture of Kubernetes

Source: Kubernetes

A Kubernetes cluster architecture consists of Nodes, the Control Plane, Controllers, the Cloud Controller Manager, and Garbage Collection.

Node

A node may be a physical or a virtual machine, depending on the cluster. Kubernetes runs the workload by placing containers into Pods, and the Pods run on these nodes. We can find the status of a node with the following command.

kubectl describe node <insert-node-name-here>
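When the node names are not known in advance, they can be read from the cluster first. The sketch below lists the nodes and then describes each one; it assumes `kubectl` is already connected to a cluster, and the function names are only illustrative.

```shell
# List all nodes with their status, roles, age, and version.
list_nodes() {
  kubectl get nodes -o wide
}

# Describe every node in turn. Names come from the cluster itself
# (printed as node/<name>), so nothing is hard-coded here.
describe_all_nodes() {
  local node
  for node in $(kubectl get nodes -o name); do
    echo "=== ${node} ==="
    kubectl describe "${node}"
  done
}
```

The Conditions section of each description shows whether the node is Ready and whether it is under memory, disk, or PID pressure.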

Control Plane

The control plane runs the cluster, and it consists of the following.

- API Server

- Controller Manager

- Scheduler

- etcd

Communication from the nodes to the control plane follows a "hub-and-spoke" API pattern: all API usage from the nodes terminates at the API server. For communication from the control plane to the nodes, there are two paths. One is from the API server to the kubelet process that runs on each node in the cluster, and the other is from the API server to any node, service, or pod through the API server's proxy functionality.
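Because every request goes through the API server, it is also the natural place to check the health of the control plane. The following is a minimal sketch, assuming `kubectl` is connected to a cluster and the current user is allowed to read the `/readyz` endpoint; the function names are illustrative.

```shell
# Print the address of the control plane, i.e. the API server endpoint
# that kubectl and the nodes talk to.
show_api_server() {
  kubectl cluster-info
}

# Ask the API server for its readiness checks. The verbose form lists
# each individual check, including the one for etcd.
check_api_server_ready() {
  kubectl get --raw='/readyz?verbose'
}
```

On a managed service such as AKS the control plane components are operated by the provider, so the API server endpoint is usually the only part of it that is directly reachable.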

Virtual network between the control plane and node

Kubernetes has supported SSH tunnels to protect the communication paths between the control plane and the nodes. SSH tunnels are now deprecated, and a TCP-level proxy (the Konnectivity service) has replaced them.

Monolith vs Microservice

You can learn more about Monolith and Microservice architecture from the previous article.

| Monolith | Microservice |
| --- | --- |
| It is the traditional approach to designing software. | It is a comparatively modern approach. |
| A single-tiered software program in which data access and the user interface are combined into one program on a single platform. | The application tiers are managed independently, each as a separate unit in its own repository with its own allocated processor and memory resources. |
| All the application tiers lie in the same unit and are managed in a single repository, sharing system resources such as processor and memory. | Well-defined APIs connect the units to each other. |
| The entire application relies on a single programming language, and the product is released as a single binary. | The application may use several programming languages, and each unit is released as its own binary. |

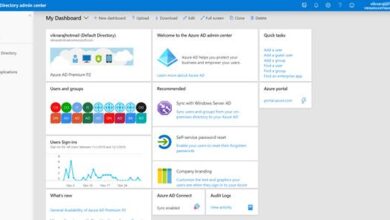

Azure Kubernetes Service (AKS)

Azure Kubernetes Service provides the ability to deploy and manage containerized applications with a fully managed Kubernetes service. It offers integrated continuous integration and continuous delivery (CI/CD), serverless architecture, and enterprise-grade security for our applications. It provides solutions for microservices, DevOps, and even support for training machine learning models. AKS simplifies the deployment, management, and operation of Kubernetes, so applications can be scaled and run with confidence. In addition, the Kubernetes environment is secured and containerized application development is accelerated. Developers and DevOps engineers can work the way they want with open-source tools and APIs, and CI/CD can be set up in just a few clicks with AKS.

The elements of orchestration are:

- Scheduling

- Affinity / Anti-affinity

- Health Monitoring

- Failover

- Scaling

- Networking

- Service Discovery

- Coordinated App Upgrades

Azure Container Instances (ACI)

Azure Container Instances makes it easy to run containers on Azure without managing servers. Agility increases, and the applications are secured by hypervisor isolation. More about ACI in Containers and Container Orchestration.

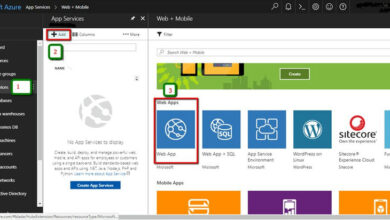

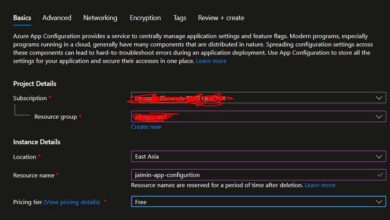

How do we Deploy a Kubernetes Cluster in Azure?

We can deploy a Kubernetes cluster in Azure by following the steps below. All these commands are executed in the Azure CLI.

Create a resource group

az group create

Create AKS cluster

az aks create

Connect to Cluster

az aks get-credentials

Now, the application can be run.
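The three steps above can be sketched with their usual arguments as follows. The resource group and cluster names match the ones used later in this article; the location and the node count of 2 are assumptions, so adjust them to your subscription.

```shell
# Example values; replace with your own. NODE_COUNT is an assumption.
RESOURCE_GROUP="myResourceGroup"
CLUSTER_NAME="myAKSCluster"
LOCATION="eastus"
NODE_COUNT=2

deploy_cluster() {
  # 1. Create the resource group that will hold the cluster.
  az group create --name "$RESOURCE_GROUP" --location "$LOCATION" || return 1

  # 2. Create the AKS cluster; SSH keys are generated if none exist.
  az aks create --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
    --node-count "$NODE_COUNT" --generate-ssh-keys || return 1

  # 3. Merge the cluster credentials into the local kubeconfig so that
  #    kubectl talks to the new cluster.
  az aks get-credentials --resource-group "$RESOURCE_GROUP" \
    --name "$CLUSTER_NAME" || return 1

  # 4. Confirm the connection by listing the cluster nodes.
  kubectl get nodes
}
```

After signing in with `az login`, calling `deploy_cluster` runs the steps in order and stops at the first one that fails.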

How can we scale a Cluster?

The following steps work with Azure CLI version 2.0.53 and later. To check the current version of your Azure CLI, run az --version.

To see the number and state of pods,

kubectl get pods

The output shows the front-end and back-end pods.

NAME READY STATUS RESTARTS AGE

azure-vote-back-2549590172-4d2r5 1/1 Running 0 29m

azure-vote-front-840927080-tf34m 1/1 Running 0 28m

To change the number of front-end pods manually,

kubectl scale --replicas=8 deployment/azure-vote-front

This command scales the front-end pods to eight.

Verify with,

kubectl get pods

This confirms that AKS has created the additional pods. The output resembles the following.

NAME READY STATUS RESTARTS AGE

azure-vote-back-2606967446-nmpcf 1/1 Running 0 15m

azure-vote-front-3309479140-2hfh0 1/1 Running 0 5m

azure-vote-front-3309479140-bzt05 1/1 Running 0 3m

azure-vote-front-3309479140-fvcvm 1/1 Running 0 2m

azure-vote-front-3309479140-hrbf2 1/1 Running 0 11m

azure-vote-front-3309479140-qphz8 1/1 Running 0 4m

azure-vote-front-3309479140-mnsf2 1/1 Running 0 1m

azure-vote-front-3309479140-rtyz8 1/1 Running 0 3m

azure-vote-front-3309479140-uwqf2 1/1 Running 0 6m

azure-vote-front-3309479140-poiz8 1/1 Running 0 2m
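Scaling pods changes how many copies of the application run; scaling nodes changes how many machines they run on. The sketch below wraps both, assuming the `azure-vote-front` deployment from the example and the resource group and cluster names used elsewhere in this article. The function names are illustrative.

```shell
RESOURCE_GROUP="myResourceGroup"
CLUSTER_NAME="myAKSCluster"
DEPLOYMENT="azure-vote-front"

# Scale the deployment to the replica count given as $1, then wait
# until the deployment reports the rollout as complete.
scale_pods() {
  kubectl scale --replicas="$1" "deployment/${DEPLOYMENT}" || return 1
  kubectl rollout status "deployment/${DEPLOYMENT}"
}

# Scale the cluster itself to the node count given as $1.
# With more than one node pool, az aks scale also needs --nodepool-name.
scale_nodes() {
  az aks scale --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
    --node-count "$1"
}
```

For example, `scale_pods 8` followed by `scale_nodes 3` would give the eight front-end pods three nodes to run on.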

Automation

Kubernetes supports horizontal pod autoscaling, which adjusts the number of pods in a deployment automatically. To adjust the number of nodes automatically, use the cluster autoscaler.
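Both kinds of autoscaling can be switched on from the command line. The sketch below is one possible setup; the CPU target of 50% and the pod and node limits are assumptions chosen for illustration. Horizontal pod autoscaling on CPU also requires the pods to declare CPU requests and the cluster to have a metrics source.

```shell
RESOURCE_GROUP="myResourceGroup"
CLUSTER_NAME="myAKSCluster"
DEPLOYMENT="azure-vote-front"

# Keep between 3 and 10 front-end pods, targeting 50% average CPU use
# (all three values are assumptions).
enable_pod_autoscaling() {
  kubectl autoscale deployment "$DEPLOYMENT" --cpu-percent=50 --min=3 --max=10 || return 1
  # Show the resulting HorizontalPodAutoscaler.
  kubectl get hpa
}

# Let AKS add or remove nodes between 1 and 3 (assumed limits)
# depending on whether pods can be scheduled.
enable_cluster_autoscaler() {
  az aks update --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
    --enable-cluster-autoscaler --min-count 1 --max-count 3
}
```

The two work together: the pod autoscaler asks for more pods under load, and the cluster autoscaler adds nodes when those pods no longer fit.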

How do we upgrade a cluster?

First of all, find out which upgrades are available.

az aks get-upgrades

Instance

az aks get-upgrades --resource-group myResourceGroup --name myAKSCluster

When you are ready to upgrade, run the following, replacing KUBERNETES_VERSION with one of the versions listed by az aks get-upgrades.

az aks upgrade --resource-group myResourceGroup --name myAKSCluster --kubernetes-version KUBERNETES_VERSION

Next, validate the upgrade with the az aks show command.

az aks show --resource-group myResourceGroup --name myAKSCluster --output table

Name Location ResourceGroup KubernetesVersion ProvisioningState Fqdn

------------ ---------- --------------- ------------------- ------------------- ----------------------------------------------------------------

myAKSCluster eastus myResourceGroup 1.19.1 Succeeded myaksclust-myresourcegroup-23da35-rd59a4dh.hcp.eastus.azmk8s.io
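The check, upgrade, and validate sequence can be combined into one helper. This is a sketch under the same naming assumptions as above; the target version is passed as an argument and should be one that `az aks get-upgrades` reports as available. The function name is illustrative.

```shell
RESOURCE_GROUP="myResourceGroup"
CLUSTER_NAME="myAKSCluster"

# Usage: upgrade_cluster <kubernetes-version>
upgrade_cluster() {
  if [ -z "$1" ]; then
    echo "usage: upgrade_cluster <kubernetes-version>" >&2
    return 1
  fi

  # Show which versions the cluster can move to.
  az aks get-upgrades --resource-group "$RESOURCE_GROUP" \
    --name "$CLUSTER_NAME" --output table || return 1

  # Upgrade to the requested version; --yes skips the confirmation prompt.
  az aks upgrade --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
    --kubernetes-version "$1" --yes || return 1

  # Validate: the KubernetesVersion column should show the new version.
  az aks show --resource-group "$RESOURCE_GROUP" --name "$CLUSTER_NAME" \
    --output table
}
```

Dropping `--yes` restores the interactive confirmation, which may be the safer default on a production cluster.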

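Finally, to delete the cluster, delete its resource group. This removes the cluster together with every other resource in the group.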

az group delete --name myResourceGroup --yes --no-wait
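Because `--no-wait` returns immediately, the deletion continues in the background. A small sketch for checking on it afterwards, assuming the same resource group name; the function names are illustrative.

```shell
RESOURCE_GROUP="myResourceGroup"

# Start deleting the resource group without waiting for completion.
delete_cluster() {
  az group delete --name "$RESOURCE_GROUP" --yes --no-wait
}

# Prints "true" while the group still exists and "false" once the
# deletion has finished.
cluster_group_exists() {
  az group exists --name "$RESOURCE_GROUP"
}
```

Running `cluster_group_exists` a few minutes after `delete_cluster` shows whether the resources are really gone, which matters because they are billed until then.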

Conclusion

Thus, in this article, we learned briefly about Azure Kubernetes Service, Containers, the differences between virtual machines and containers, the benefits of using containers, and Docker. Then we dived into Kubernetes and its basic architecture, and briefly compared Monolith and Microservice. Finally, we went deeper into Azure Kubernetes Service and learned to deploy a Kubernetes cluster in Azure, scale the cluster, upgrade it, and delete it.