What Is Azure Data Lake

What is a data lake?

A data lake is a place where huge quantities of raw data, or data in its native format, are stored. Unlike a data warehouse, which stores data in files or folders (a hierarchical structure), a data lake provides unlimited space to store data, unrestricted file size, and many different ways to access data, along with the tools necessary for analyzing, querying, and processing it. In a data lake, each data item is assigned a unique identifier and metadata tags. In this way, the data lake can be queried for relevant data, and that smaller set of relevant data can then be analyzed. Data can also be stored in a data lake before being curated and moved to a data warehouse.
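To make the identifier-and-tags idea concrete, here is a minimal Python sketch of a toy in-memory lake. The names `DataItem`, `add_item`, and `query_by_tag` are hypothetical and are not part of any Azure API; a real data lake would keep this metadata in a catalog service rather than in a dictionary.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class DataItem:
    """One raw object in the lake, kept in its native format."""
    payload: bytes
    tags: set
    # Each item gets a unique identifier when it is created.
    item_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def add_item(lake: dict, payload: bytes, tags: set) -> str:
    """Store a payload with its tags and return the generated identifier."""
    item = DataItem(payload=payload, tags=set(tags))
    lake[item.item_id] = item
    return item.item_id


def query_by_tag(lake: dict, tag: str) -> list:
    """Return only the items that carry the given tag."""
    return [item for item in lake.values() if tag in item.tags]


# Made-up sample items, for illustration only.
lake = {}
add_item(lake, b"2024-01-01 ERROR disk full", {"log", "machine"})
add_item(lake, b"Great product, thanks!", {"email", "human"})
add_item(lake, b"temp=21.5", {"sensor", "machine"})

machine_items = query_by_tag(lake, "machine")
print(len(machine_items))  # 2
```

The query returns the smaller, relevant subset, which is the part that would then be handed to an analytics step.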

Examples of the types of data that can be stored in a data lake include:

- Data generated by humans (e.g. blogs, emails, Tweets)

- Data generated by machines (e.g. log files, Internet of Things, sensor readings)

- Operational data (e.g. ticketing, inventory, sales)

- Pictures, audio, and video

Prior to the development of Hadoop, a set of open source programs and procedures that can be used in big data operations, only resource-rich companies such as Google and Facebook could reap the benefits of data lakes. With the emergence of Hadoop, however, data lakes became much more accessible to a wide range of organizations, which can now store and process their big data.

Data lakes are used to provide large quantities of detailed source data, which is then used for a variety of data analytics, including mining, graphing, clustering, and statistics. The outputs from data analytics include churn models, estimates, visualizations, and identification of customer segments, all of which can be valuable to businesses and organizations.

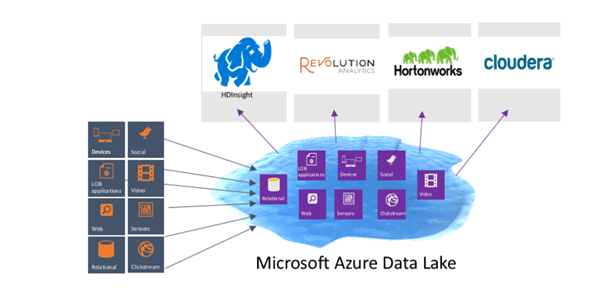

Azure Data Lake is a Hadoop-compatible file system (HDFS) that allows Microsoft services such as Azure HDInsight and Revolution R Enterprise, as well as industry Hadoop distributions such as Hortonworks and Cloudera, to connect to it. Azure Data Lake has all Azure Active Directory features, including Multi-Factor Authentication, conditional access, role-based access control, application usage monitoring, security monitoring, and alerting.

Azure Data Lake has no fixed limits on how much data can be stored in a single account. It can also store very large files, with no fixed limits on size. This means that Azure Data Lake can support massively parallel queries, so that Hadoop and advanced analytics can be run on all the data in the data lake. Additionally, Azure Data Lake can handle high volumes of small writes at low latency, which makes it well suited to scenarios such as the Internet of Things (IoT), website analytics, and analytics from sensors, among others.

Services

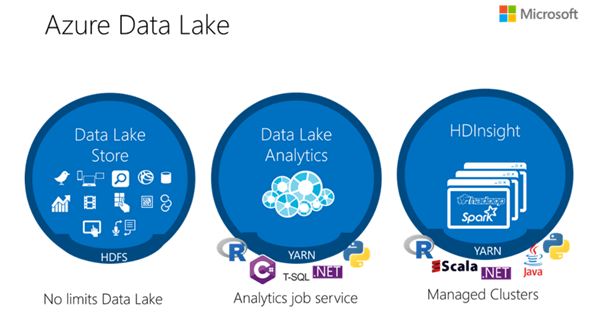

Azure Data Lake includes three services:

- Azure Data Lake Store

- Azure Data Lake Analytics

- Azure HDInsight

Azure Data Lake Store is a fully distributed, scalable, and cost-effective solution for big data analytics, allowing processing and analytics to be performed across platforms and languages with data of any shape, size, and speed. It keeps data separate from compute and allows access to data whether or not a cluster is running. Multiple clusters can access the same storage, so data can be easily shared.

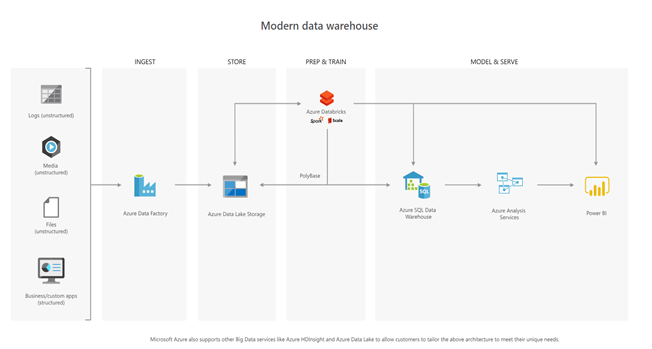

It integrates with other Azure data services, including Azure Databricks and Azure Data Factory, and also works with existing IT investments for identity, management, and security, enabling organizations to easily build end-to-end big data and advanced analytics solutions.

Azure Data Lake Store has high levels of security, including encryption of data at rest and storage account firewalls. It also uses Azure Active Directory for authentication and Access Control Lists to manage access to data held in the data lake.

You can carry out the following operations using Azure Data Lake Store's available languages and interfaces:

- Account Management Operations: Azure PowerShell, .NET SDK, REST API, Python.

- Filesystem Operations: Azure PowerShell, Java SDK, .NET SDK, REST API, Python.

- Load and move data: Azure PowerShell, Azure Data Factory, AdlCopy (Storage Blob to Data Lake Store), DistCp (HDInsight cluster storage), Sqoop (Azure SQL Database), Azure Import/Export Service (for large offline files), SSIS (using the Azure feature pack).

The diagram below shows some of the options possible with the Data Lake Store.

The advantages of Azure Data Lake Storage over Azure Storage Blobs include:

- Optimized for parallel processing

- No file size or storage limits

- Security is integrated with Azure Active Directory.

Azure Data Lake Analytics is a cloud-based, distributed data processing architecture based on YARN, the same as the Hadoop platform. It enables processing of very large data sets, integration with existing data warehousing, and parallel processing of both structured and unstructured data. Data Lake Analytics works with Azure Data Lake Store, Azure Storage blobs, Azure SQL Database, and Azure SQL Data Warehouse. Azure Data Lake Analytics is offered only as a platform service by Microsoft, which means that you won't have to deal with any cluster issues and you won't have to manage security separately.

Azure Data Lake Analytics can be used for the following, among others:

- Processing data scraped from websites.

- Preparing data to be inserted into a data warehouse.

- Processing unstructured image data.

Azure Data Lake Analytics uses U-SQL. This language allows you to efficiently analyze data in the store as well as in relational stores, such as Azure SQL Database. U-SQL works with any kind of data, whether structured or unstructured. For example, it can also handle:

- Operations over a set of files with patterns.

- Using Partitioned Tables.

- Federated Queries against Azure SQL DB.

- Encapsulating your U-SQL code with Views, Table-Valued Functions, and Procedures.

- SQL Windowing Functions.

- Programming with C# User-defined Operators (custom extractors, processors).

- Complex Types (MAP, ARRAY).

- Using U-SQL in data processing pipelines.

- U-SQL in a lambda architecture for IoT Analytics.
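The first item in the list, operating over a set of files selected by a pattern, can be illustrated outside U-SQL. The sketch below is plain Python, not U-SQL: it matches paths against an assumed layout of the form `/logs/<year>/<month>/<name>.csv` and exposes the matched parts as extra columns that can be filtered on. The path layout and the function name `match_file_set` are assumptions made for illustration only.

```python
import re

# Hypothetical layout: /logs/<4-digit year>/<2-digit month>/<name>.csv
PATTERN = re.compile(
    r"^/logs/(?P<year>\d{4})/(?P<month>\d{2})/(?P<name>[^/]+)\.csv$"
)


def match_file_set(paths):
    """Yield one dict per matching path, with the pattern parts as columns."""
    for path in paths:
        m = PATTERN.match(path)
        if m:
            row = m.groupdict()
            row["path"] = path
            yield row


# Made-up paths, for illustration only.
paths = [
    "/logs/2023/11/web.csv",
    "/logs/2023/12/web.csv",
    "/logs/2023/12/readme.txt",  # skipped: does not match the pattern
    "/logs/2024/01/sensor.csv",
]

rows = list(match_file_set(paths))

# Filter on the columns derived from the path, as a query would.
december = [r["path"] for r in rows if r["year"] == "2023" and r["month"] == "12"]
print(december)  # ['/logs/2023/12/web.csv']
```

Filtering on values derived from the path means files outside the requested range never need to be opened.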

Data Lake Analytics can also be a cost-effective option, as you only pay on a per-job basis when the data is being processed. You can choose a pay-as-you-go or a monthly pre-pay plan. For regular usage, a monthly plan is the most cost-effective.

Setting up a Data Lake Analytics operation involves the following steps:

- Create a Data Lake Analytics account

- Prepare the source data. You must have either an Azure Data Lake Store account or an Azure Blob storage account.

- Develop a U-SQL script.

- Submit a job (U-SQL script) to your Data Lake Analytics account. The job reads from the source data, processes the data as instructed in the U-SQL script, and then saves the output to either a Data Lake Store or Blob storage account.
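The read, process, and save flow of a job can be pictured with a small local stand-in. The following Python sketch is not U-SQL and does not call any Azure service: local temporary files play the role of the store, and `run_job`, the column names, and the sample rows are all hypothetical.

```python
import csv
import tempfile
from collections import defaultdict
from pathlib import Path


def run_job(source: Path, output: Path) -> None:
    """Read the source rows, total the amount per region, write the result."""
    totals = defaultdict(float)
    with source.open(newline="") as f:
        for row in csv.DictReader(f):
            totals[row["region"]] += float(row["amount"])
    with output.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["region", "total"])
        for region in sorted(totals):
            writer.writerow([region, totals[region]])


workdir = Path(tempfile.mkdtemp())
source = workdir / "sales.csv"
output = workdir / "totals.csv"

# Prepare the source data (made-up sample rows).
source.write_text("region,amount\neast,10\nwest,5\neast,2.5\n")

# Run the job: it reads the source, processes it, and saves the output.
run_job(source, output)
result = output.read_text().splitlines()
print(result)  # ['region,total', 'east,12.5', 'west,5.0']
```

In the real service the script would be written in U-SQL and the input and output paths would point at a Data Lake Store or Blob storage account rather than at local files.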

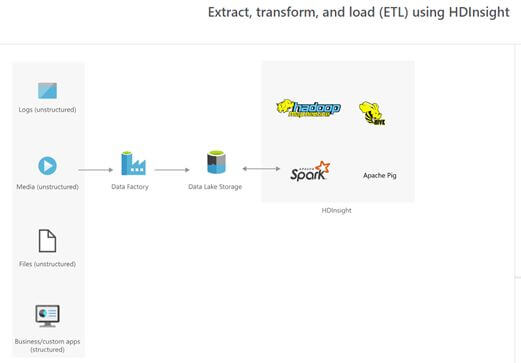

Azure HDInsight is a Hadoop service offering hosted in Azure that enables clusters of managed Hadoop instances, delivering Hadoop on top of the Azure platform. Azure HDInsight provides a software framework designed to manage, analyze, and report on big data. You can create multiple clusters to meet the needs of different jobs, and they can be scaled up and down as needed.

Azure HDInsight has four main types of workloads: ETL/ELT, which uses a Hadoop cluster; Internet of Things or data in motion, which uses a Storm cluster; transactional processing, which uses HBase; and data science or data analytics, which uses a Spark or R Server with Spark cluster type.

Azure HDInsight has guaranteed high availability at large scale, with SLAs of 99.9 percent. HDInsight monitors the health of your big data applications and recovers automatically from failures. You can also choose from more than 30 popular Hadoop and Spark applications, which HDInsight then deploys to the cluster. Alternatively, you can build Hadoop/Spark applications using development tools such as Visual Studio, Eclipse, or IntelliJ; notebooks such as Jupyter or Zeppelin; languages including Scala, Python, R, or C#; and frameworks such as Java or .NET.
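To put the 99.9 percent figure in perspective, the short calculation below converts an availability percentage into the downtime it permits. It is plain arithmetic; the 30-day month and 365-day year are assumptions, and the way the service actually measures availability for SLA purposes is not covered here.

```python
def allowed_downtime_minutes(sla_percent: float, days: int) -> float:
    """Minutes of downtime permitted over `days` at the given availability."""
    total_minutes = days * 24 * 60
    return total_minutes * (100 - sla_percent) / 100


monthly = allowed_downtime_minutes(99.9, days=30)   # assumed 30-day month
yearly = allowed_downtime_minutes(99.9, days=365)   # assumed 365-day year
print(round(monthly, 1))  # 43.2
print(round(yearly, 1))   # 525.6
```

In other words, a 99.9 percent availability target leaves room for roughly 43 minutes of downtime in a 30-day month.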

HDInsight also integrates with other Azure services such as Data Factory and Data Lake Storage, which allows you to build comprehensive analytics pipelines. Additionally, HDInsight can help you meet compliance standards, as it includes encryption and integration with Azure Active Directory.

You can use Azure HDInsight to:

- Create big data solutions and services that are powered by Hadoop.

- Monitor and manage Hadoop clusters.

- Provide report statistics on the availability and use of big data.

If you want to learn more about the information in this article, here are some great links for you to start with!

Official documentation for Azure Data Lake

Microsoft labs for Azure Data Lake

Video – Azure Data Lake