DataOps On Azure

Dataops has turn out to be a current development these years whereas constructing knowledge & analytics resolution on public cloud platforms like Azure, AWS & GCP, and so forth. Dataops elevates one of the best practices of Devops & Information Engineering to construct the information platforms on cloud.

On this article, we are going to discover about what’s the distinction between devops & dataops, dataops for knowledge engineering on Azure.

Distinction between Devops & Dataops

Devops engineers deal with creating & delivering the software program system whereas dataops focuses on constructing, testing, and releasing the information options.

However CI/CD pipelines for Dataops & Devops has completely different supply life cycles.

Devops life cycle focuses on,

- Steady Integration with Construct pipelines

- Steady Deployment with Launch pipelines

- Steady Testing to enhance knowledge high quality

Dataops life cycle focuses on,

- CI/CD pipelines for software deployment

- Guarantee Related knowledge & associated parts are current & configured

- Monitor authenticated & approved entry to knowledge

Dataops for Information Engineering on Azure

Dataops helps knowledge engineering to implement the end-to-end orchestration pipelines together with the information platform parts & software code (Python, spark, and so forth) & setting particular info.

It helps knowledge engineers to effectively collaborate with the information stakeholders to realize scalability, reliability, and agility.

Main steps concerned in constructing the dataops pipelines on Azure are as follows,

Datazones in Azure Information Lake

Most enterprise organizations observe under technique to handle datazones within the Azure Information Lake ADLS Gen2.

- Uncooked Information Retailer

- Information Cleaning & Transformation Retailer

- Aggregated Information Retailer

Automated Information Validation & High quality Checks utilizing Azure Information Manufacturing unit & Databricks

We will use databricks notebooks to create automated knowledge validation & high quality test utilizing programming languages like python, scala, pyspark, and so forth.

As a way to use the sequence of databricks notebooks within the logical order utilizing Azure Information Manufacturing unit as an orchestrator.

Git Integration for Code Growth

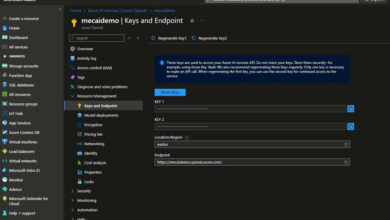

Whereas doing the event with databricks notebooks and knowledge manufacturing facility code then combine the azure providers with Git integration instruments like Azure Devops, Bit bucket and so forth to keep up the code versioning & centralized code repository.

Steady Integration & Deployment for Information Engineering workloads

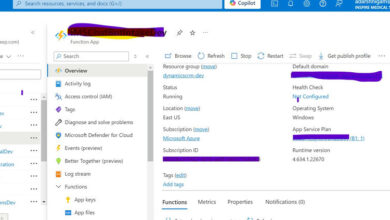

The perfect apply is to combine Azure Devops with CI/CD pipeline which can obtain the artifacts from the Azure Devops repo, carry out steady testing to make sure the standard of the code. As soon as the testing is profitable then launch pipeline will guarantee that deployment to all environments might be executed mechanically.

Dataops permits knowledge engineers to effectively develop the code guaranteeing the standard of the code and cut back time to marketplace for the appliance growth.