Multi-language identification and transcription in Video Indexer

Multi-language speech transcription was recently introduced into Microsoft Video Indexer at the International Broadcasting Convention (IBC). It is available as a preview capability, and customers can already start experiencing it in our portal. More details on all our IBC2019 enhancements can be found here.

Multi-language videos are common media assets in the globalization context. Global political summits, economic forums, and sports press conferences are examples of venues where speakers use their native language to convey their own statements. These videos pose a unique challenge for companies that need to provide automatic transcription for large video archives. Automatic transcription technologies expect users to explicitly specify the video language in advance in order to convert speech to text. This manual step becomes a scalability obstacle when transcribing multi-language content, because someone has to manually tag audio segments with the appropriate language.

Microsoft Video Indexer provides a unique capability of automatic spoken language identification for multi-language content. This solution allows users to easily transcribe multi-language content without going through tedious manual preparation steps before triggering it. As a result, it can save anyone with a large archive of videos both time and money, and enable discoverability and accessibility scenarios.

Multi-language audio transcription in Video Indexer

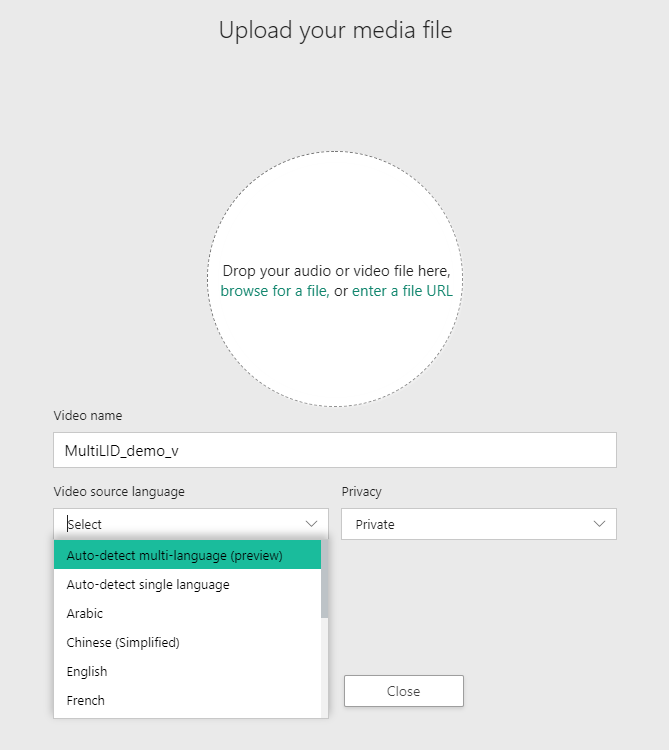

The multi-language transcription capability is available as part of the Video Indexer portal. Currently, it supports four languages (English, French, German, and Spanish) and expects up to three different languages in an input media asset. While uploading a new media asset, you can select the “Auto-detect multi-language” option as shown below.

Our application programming interface (API) supports this capability as well by enabling users to specify ‘multi’ as the language in the upload API. Once the indexing process is completed, the index JavaScript Object Notation (JSON) will include the underlying languages. Refer to our documentation for more details.
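As a rough illustration of the API option described above, the sketch below only builds the URL for an upload request with the language set to 'multi'. The base URL, path layout, and query parameter names (`accessToken`, `name`, `videoUrl`, `language`) are assumptions based on the public upload API and should be checked against the documentation. The account ID, token, and video URL are placeholders, and the helper `build_upload_url` is hypothetical.

```python
from urllib.parse import urlencode

# Assumed base URL and path layout; verify against the Video Indexer API reference.
API_BASE = "https://api.videoindexer.ai"


def build_upload_url(location, account_id, access_token, name, video_url,
                     language="multi"):
    """Build the URL of an upload request that asks for multi-language indexing."""
    query = urlencode({
        "accessToken": access_token,
        "name": name,
        "videoUrl": video_url,
        "language": language,  # 'multi' requests automatic multi-language detection
    })
    return f"{API_BASE}/{location}/Accounts/{account_id}/Videos?{query}"


url = build_upload_url(
    location="trial",                                    # placeholder region
    account_id="00000000-0000-0000-0000-000000000000",   # placeholder account
    access_token="<access-token>",                       # placeholder token
    name="press-conference",
    video_url="https://example.com/press-conference.mp4",
)
print(url)
```

The resulting URL would then be sent as an HTTP POST with whichever client you prefer. The request itself is left out so that the sketch runs without credentials.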

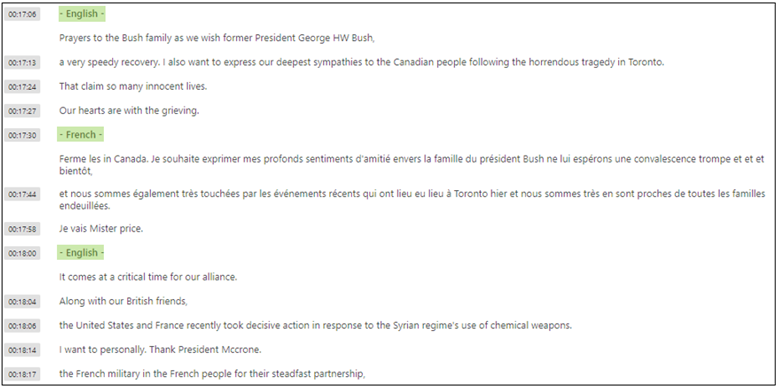

Additionally, each instance in the transcription section will include the language in which it was transcribed.
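To make the per-line language tagging concrete, here is a minimal sketch that groups transcript lines by language. The JSON fragment is hand-written for illustration, and its field names (`videos`, `insights`, `transcript`, `language`, `instances`, `start`, `end`) are assumptions about the index layout rather than a verified schema. The helper `lines_by_language` is hypothetical.

```python
from collections import defaultdict

# Hypothetical, heavily trimmed index JSON; the field names are assumptions.
index = {
    "videos": [{
        "insights": {
            "transcript": [
                {"text": "Good morning, everyone.", "language": "en-US",
                 "instances": [{"start": "0:00:01.2", "end": "0:00:03.0"}]},
                {"text": "Bonjour à tous.", "language": "fr-FR",
                 "instances": [{"start": "0:00:03.4", "end": "0:00:05.1"}]},
                {"text": "Thank you for coming.", "language": "en-US",
                 "instances": [{"start": "0:00:05.5", "end": "0:00:07.0"}]},
            ]
        }
    }]
}


def lines_by_language(index):
    """Map each language code to a list of (start, end, text) tuples."""
    grouped = defaultdict(list)
    for video in index.get("videos", []):
        for line in video.get("insights", {}).get("transcript", []):
            language = line.get("language", "unknown")
            for instance in line.get("instances", []):
                grouped[language].append(
                    (instance["start"], instance["end"], line["text"])
                )
    return dict(grouped)


grouped = lines_by_language(index)
for language, lines in grouped.items():
    print(language, lines)
```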

Customers can view the transcript and identified languages by time, jump to the specific places in the video for each language, and even see the multi-language transcription as video captions. The resulting transcription is also available as closed caption files (VTT, TTML, SRT, TXT, and CSV).

Methodology

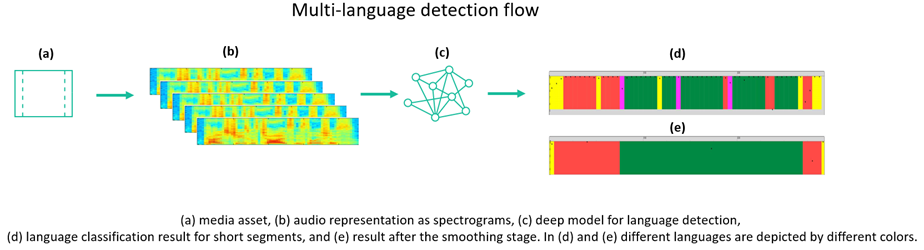

Language identification from an audio signal is a complex task. Acoustic environment, speaker gender, and speaker age are among a variety of factors that affect this process. We represent the audio signal using a visual representation, such as a spectrogram, on the assumption that different languages induce unique visual patterns that can be learned using deep neural networks.
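For readers unfamiliar with spectrograms, the following sketch computes a log-magnitude spectrogram with NumPy using a short-time Fourier transform. It is a generic illustration, not the feature pipeline used in Video Indexer. The frame length, hop size, sample rate, and the synthetic 440 Hz test tone are all assumptions chosen for the example.

```python
import numpy as np


def log_spectrogram(signal, frame_len=400, hop=160):
    """Return a (frames, frequency bins) array of log-magnitude spectra."""
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice the signal into overlapping frames and taper each with the window.
    frames = np.stack([
        signal[i * hop: i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    return np.log(magnitude + 1e-10)  # small offset avoids log(0)


sample_rate = 16000                        # assumed sample rate in Hz
t = np.arange(sample_rate) / sample_rate   # one second of audio
signal = np.sin(2 * np.pi * 440.0 * t)     # synthetic tone standing in for speech
spec = log_spectrogram(signal)
print(spec.shape)
```

Each row of `spec` is one time frame and each column one frequency band, so the array can be treated as a single-channel image and fed to an image-style neural network classifier.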

Our solution has two main stages to determine the languages used in multi-language media content. First, it employs a deep neural network to classify audio segments at very high granularity, in other words, segments of just a few seconds. While a good model will successfully identify the underlying language, it can still misidentify some segments due to similarities between languages. Therefore, we apply a second stage that examines these misses and smooths the results accordingly.
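The post does not say how the second stage smooths the per-segment predictions. As one plausible illustration, the sketch below applies a sliding-window majority vote and then merges consecutive segments into language runs. The window size, the two-second segment length, the tie-breaking rule, and the function names `smooth_labels` and `merge_runs` are all assumptions made for this example.

```python
from collections import Counter


def smooth_labels(labels, window=5):
    """Replace each label with the majority label in a centered window."""
    half = window // 2
    smoothed = []
    for i, label in enumerate(labels):
        neighborhood = labels[max(0, i - half): i + half + 1]
        counts = Counter(neighborhood)
        top = max(counts.values())
        if counts[label] == top:
            smoothed.append(label)  # keep the original label on ties
        else:
            smoothed.append(counts.most_common(1)[0][0])
    return smoothed


def merge_runs(labels, segment_seconds=2.0):
    """Collapse consecutive equal labels into (language, start, end) runs."""
    runs = []
    for i, label in enumerate(labels):
        start = i * segment_seconds
        end = start + segment_seconds
        if runs and runs[-1][0] == label:
            runs[-1] = (label, runs[-1][1], end)
        else:
            runs.append((label, start, end))
    return runs


# Raw first-stage predictions with two isolated misclassifications.
raw = ["en", "en", "de", "en", "en", "es", "es", "es", "en", "es", "es"]
smoothed = smooth_labels(raw)
runs = merge_runs(smoothed)
print(smoothed)
print(runs)
```

Here the isolated "de" and the stray "en" inside the Spanish stretch are overruled by their neighbors, leaving one English run followed by one Spanish run.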

Next steps

We introduced a differentiated capability for multi-language speech transcription. With this unique capability in Video Indexer, you can work more effectively with the content of your videos, because it allows you to immediately start searching across videos for different language segments. Over the coming months, we will be improving this capability by adding support for more languages and improving the model’s accuracy.

For more information, visit Video Indexer’s portal or the Video Indexer developer portal, and try this new capability. Read more about the new multi-language option and how to use it in our documentation.

Please use our UserVoice to share feedback and help us prioritize features, or email visupport@microsoft.com with any questions.