Extract File Names And Copy From Source Path In Azure Data Factory

Introduction

Today we'll walk through a real-world scenario: how to extract the file names from a source path and then use them in any subsequent activity based on that output. This is useful when we have to extract file names, transform or copy data from CSV, Excel, or flat files in blob storage, or when you want to maintain a table that records where the data came from.

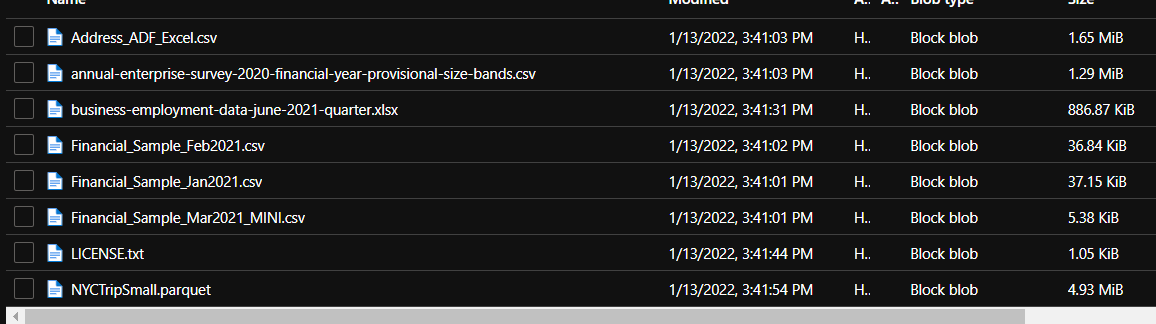

As a first step, I've created an Azure Blob Storage account and added a few files that will be used in this demo.

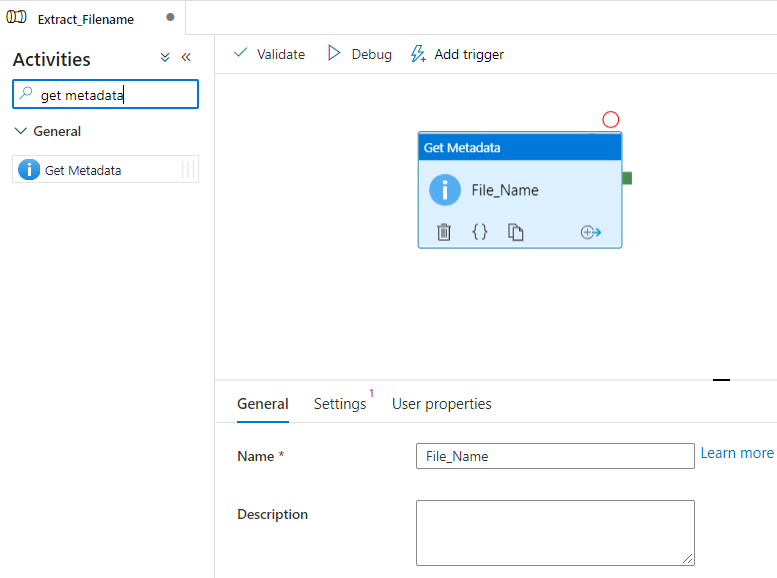

Activity 1 – Get Metadata

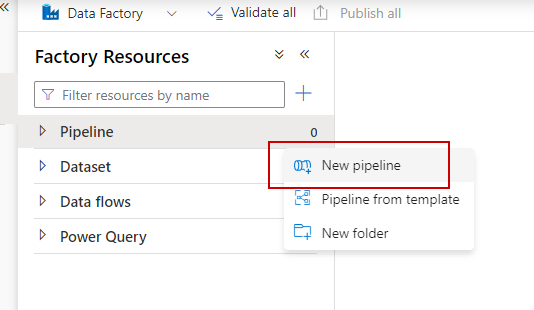

Create a new pipeline in Azure Data Factory.

Next, in the newly created pipeline, we can use the 'Get Metadata' activity from the list of available activities. The Get Metadata activity pulls the metadata of any files stored in the blob, and its output can be consumed by subsequent activity steps.

I've dragged the Get Metadata activity onto the canvas and renamed it File_Name.

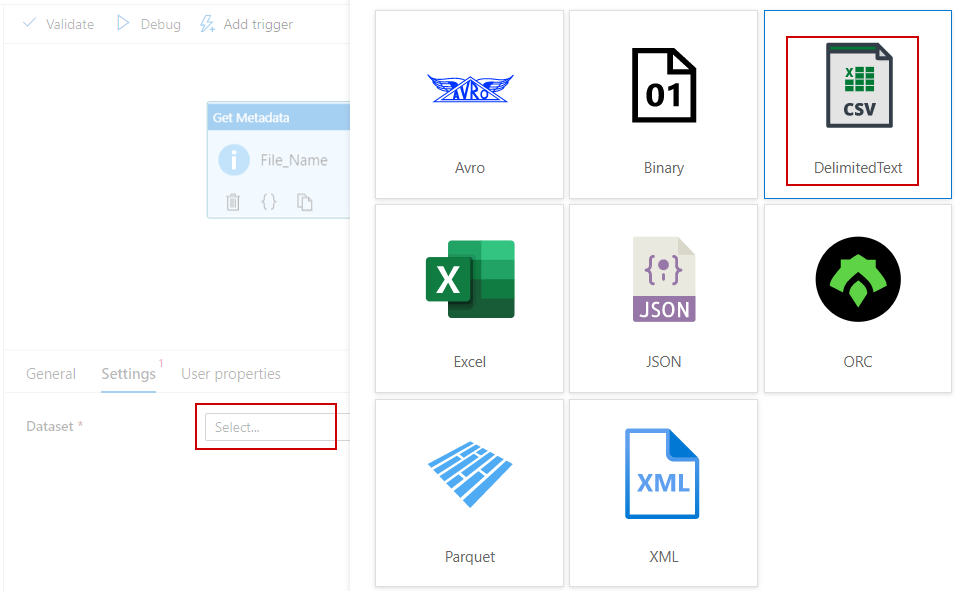

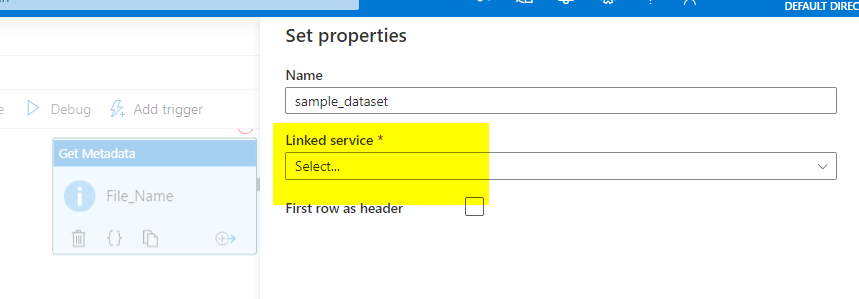

Create a linked service and point the dataset to it if you have not done that already.

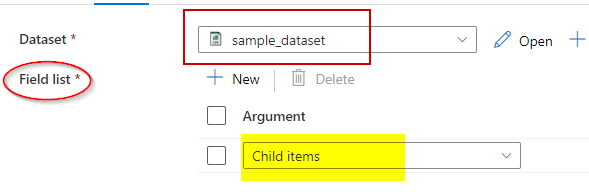

Once the linked service is selected, you have to add a new entry to the Field list. Choosing Child Items from the Field list dropdown gives you the option to loop through the contents of the storage folder. Child Items is returned in the JSON output of the Get Metadata activity, which is the important part here. There are other options available in the same dropdown that can be used based on your requirement.
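To make the shape of that output concrete, here is a minimal Python sketch of what a Get Metadata result with Child Items looks like and how the file names can be read from it. The file and folder names are made up for illustration, and the helper `file_names` is hypothetical; only the `childItems` array with `name` and `type` entries reflects the activity's output.

```python
import json

# Hypothetical sample of the Get Metadata output when Child Items is selected.
# The entries are invented; each one carries a name and a type (File or Folder).
sample_output = json.loads("""
{
  "childItems": [
    {"name": "customers.csv", "type": "File"},
    {"name": "orders.csv", "type": "File"},
    {"name": "archive", "type": "Folder"}
  ]
}
""")


def file_names(output):
    """Return the names of entries whose type is File, in listing order."""
    return [
        item["name"]
        for item in output.get("childItems", [])
        if item["type"] == "File"
    ]


print(file_names(sample_output))
```

Note that the listing can include sub-folders as well as files, so a later step may need to filter on `type` if the folder is not flat.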

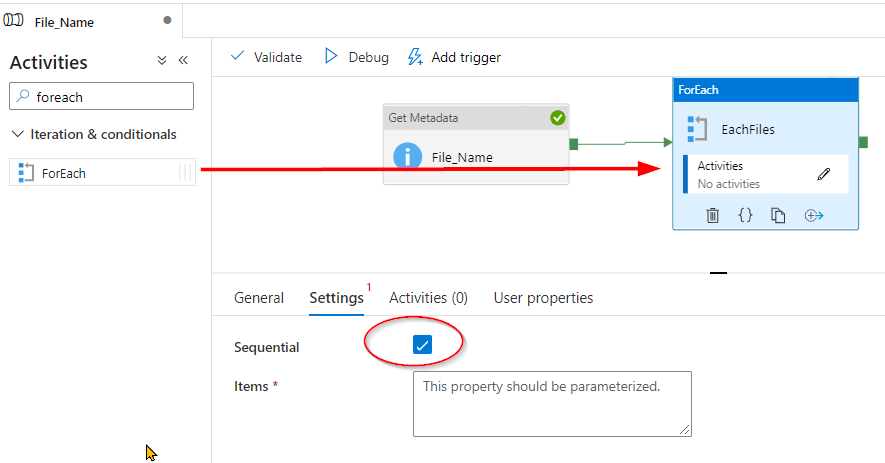

Once that is done, it's time to use the ForEach activity to loop through each filename and copy the file to the output. Make sure to check the Sequential box so that the files are iterated one at a time.

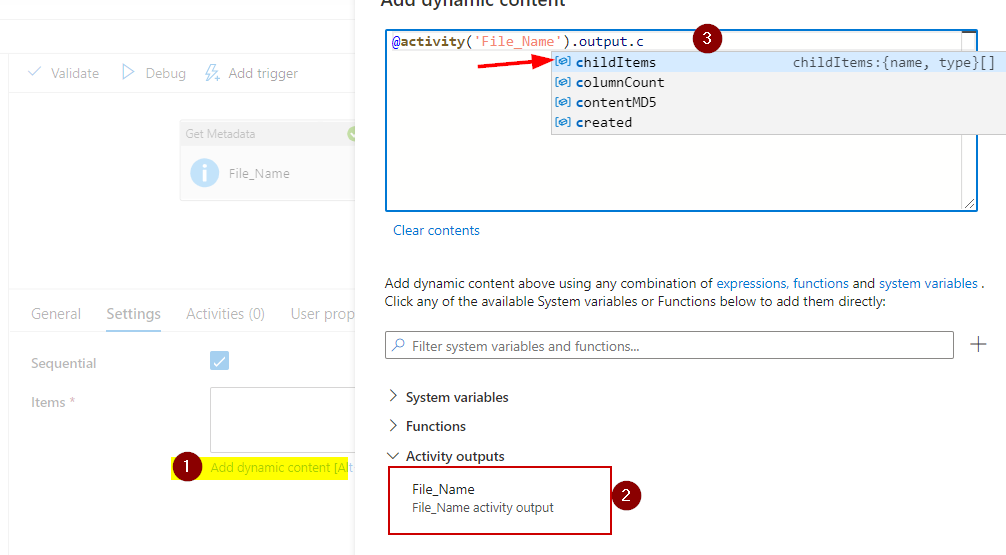

Items is where you pass the filenames as an array; the ForEach loop then takes over to iterate and process them.

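Use childItems as the array to loop through the filenames, and follow the steps below in order. The name inside @activity() must match the name of the Get Metadata activity exactly, so use whatever name you gave it in the earlier step.

@activity('File Name').output.childItems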
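For readers who prefer the pipeline's JSON view, the ForEach settings described here would look roughly like the following, written as a Python dictionary so it can be printed as JSON. This is a sketch under assumptions: the ForEach name `ForEach_File` is hypothetical, the inner activity list is left empty, and the Get Metadata activity is assumed to be named as in the expression above.

```python
import json

# Name of the Get Metadata activity; it must match the name used in @activity().
GET_METADATA_ACTIVITY = "File Name"


def build_foreach(inner_activities):
    """Build a ForEach activity definition that iterates over childItems."""
    return {
        "name": "ForEach_File",  # hypothetical name
        "type": "ForEach",
        # Run only after the Get Metadata activity has succeeded.
        "dependsOn": [
            {
                "activity": GET_METADATA_ACTIVITY,
                "dependencyConditions": ["Succeeded"],
            }
        ],
        "typeProperties": {
            # Sequential: process one file at a time instead of in parallel.
            "isSequential": True,
            "items": {
                "value": f"@activity('{GET_METADATA_ACTIVITY}').output.childItems",
                "type": "Expression",
            },
            "activities": inner_activities,
        },
    }


foreach = build_foreach([])
print(json.dumps(foreach, indent=2))
```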

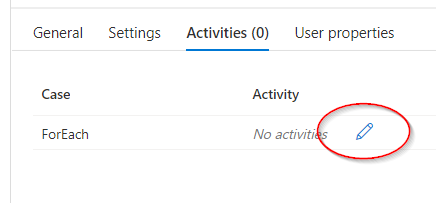

Now select the Activities tab in the ForEach and click Edit Activity. This is where you specify the activities that should be performed for each item. In this demo, we'll copy each file to our destination location.

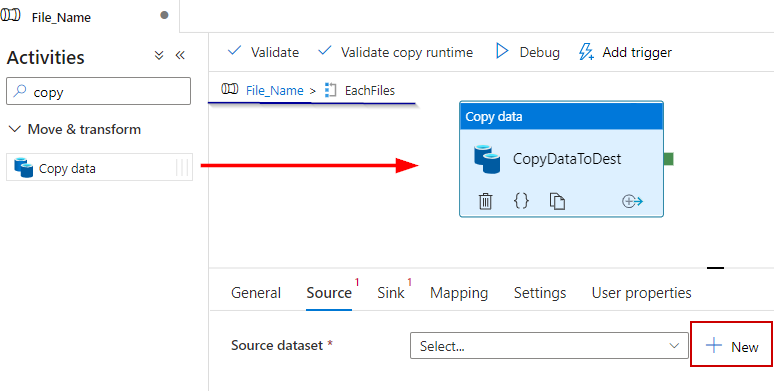

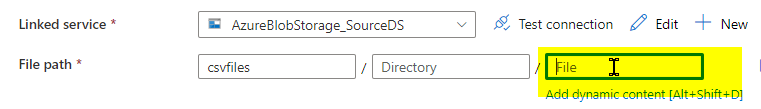

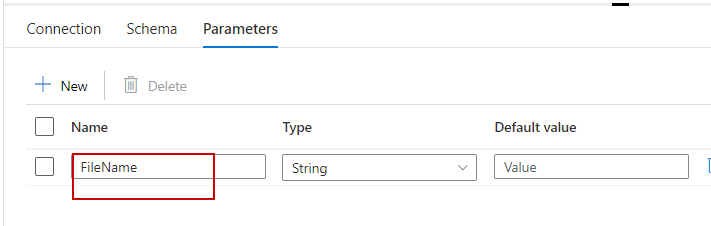

The Source and Sink tabs are self-explanatory: they point to the source and the destination. However, you cannot simply reuse the dataset we already created as the source, because this is the second part of the demo and its job is to copy the dynamic filenames output by the first step. Instead, create a new dataset and select the source Azure blob storage location up to the folder only, leaving the file name field to be parameterized dynamically.

Activity 2 – ForEach – File Copy

Now move on to the 'Parameters' tab of the dataset to create a parameter called FileName, which will be referenced in the file path on the 'Connection' tab.
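As a rough illustration, a parameterized source dataset of this kind could be represented as below, again as a Python dictionary mirroring the JSON view. Everything except the `FileName` parameter is an assumption: the dataset name `SourceFile`, the linked service name `BlobStorageLS`, the container and folder names, and the `DelimitedText` type are hypothetical placeholders.

```python
import json

# Sketch of a dataset whose folder is fixed and whose file name is a parameter.
# SourceFile, BlobStorageLS, demo-container and input are hypothetical names.
source_dataset = {
    "name": "SourceFile",
    "properties": {
        "type": "DelimitedText",  # assumed; pick the type that matches your files
        "linkedServiceName": {
            "referenceName": "BlobStorageLS",
            "type": "LinkedServiceReference",
        },
        # The parameter created on the Parameters tab.
        "parameters": {"FileName": {"type": "string"}},
        "typeProperties": {
            "location": {
                "type": "AzureBlobStorageLocation",
                "container": "demo-container",
                "folderPath": "input",
                # The Connection tab refers to the parameter for the file name.
                "fileName": {
                    "value": "@dataset().FileName",
                    "type": "Expression",
                },
            }
        },
    },
}

print(json.dumps(source_dataset, indent=2))
```

The point of the design is that the folder stays fixed in the dataset while the file name is supplied by whichever activity uses it.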

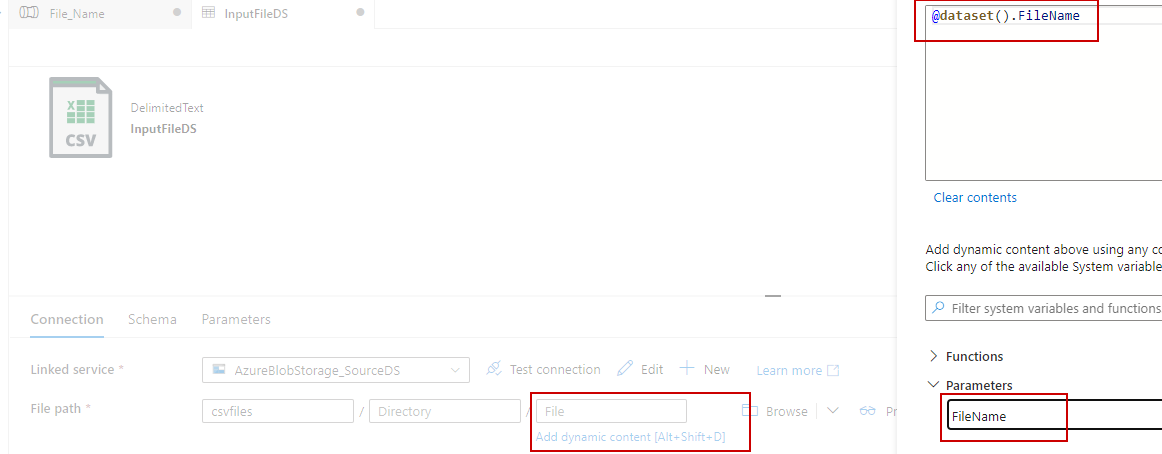

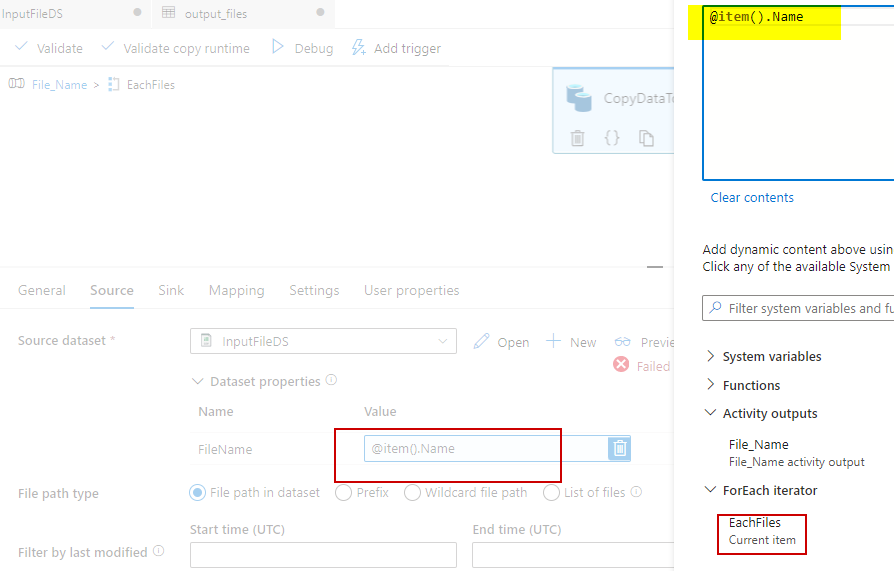

Going back to the pipeline, you can see that our newly created FileName parameter is visible in the dataset properties. Click it and add dynamic content that supplies each filename from the storage using the @item().name expression, where name is the property that each childItems entry carries.
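The chain from the loop item to the final source path can be traced with a small Python simulation. It is only a sketch of the idea, not how Data Factory evaluates expressions internally; the folder path, the sample items, and the helper functions are hypothetical.

```python
# Hypothetical folder the parameterized source dataset points at.
SOURCE_FOLDER = "demo-container/input"


def dataset_parameters(item):
    """Value the Copy activity passes for one iteration, i.e. item's name."""
    return {"FileName": item["name"]}


def resolved_path(params):
    """Path the dataset resolves to: fixed folder plus the FileName parameter."""
    return f"{SOURCE_FOLDER}/{params['FileName']}"


# Invented child items, in the shape returned by Get Metadata.
child_items = [
    {"name": "customers.csv", "type": "File"},
    {"name": "orders.csv", "type": "File"},
]

# Sequential loop: one resolved source path per item, in listing order.
paths = [resolved_path(dataset_parameters(item)) for item in child_items]
for path in paths:
    print(path)
```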

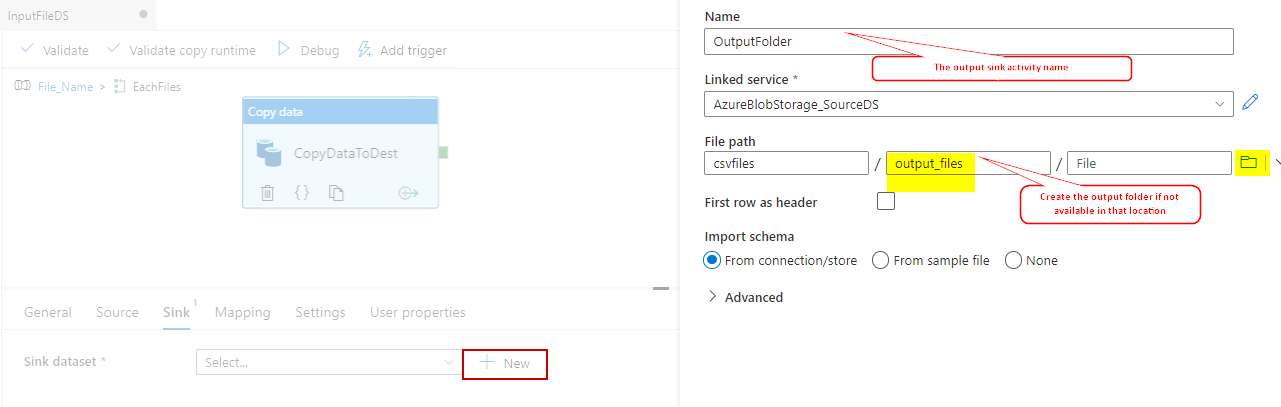

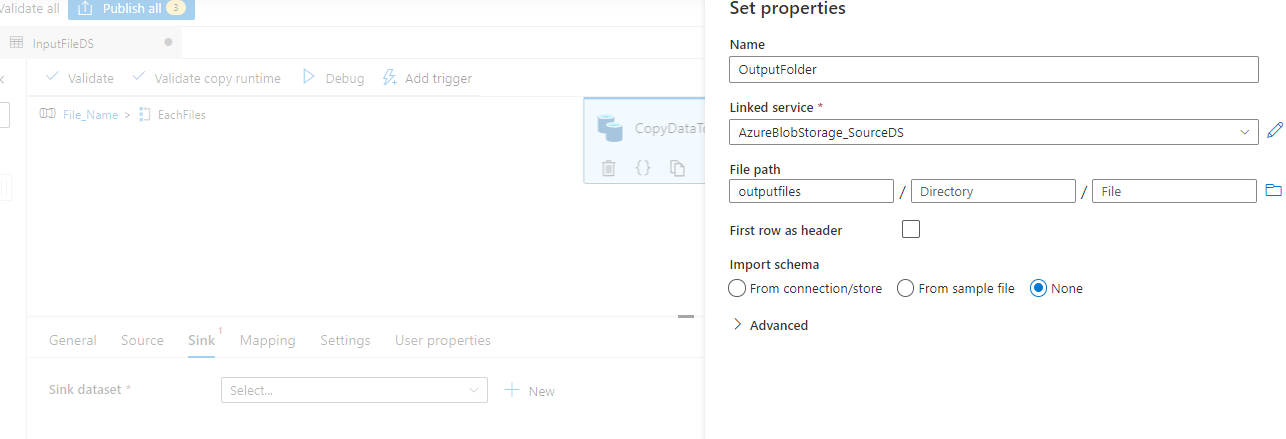

Now that the source configuration is complete, move on to configuring the Sink, which is the destination folder. Either point to an existing folder in the Azure blob location, or type the name of a folder that you want the sink to create automatically if it does not exist. Make sure to set Import Schema to 'None', otherwise it might throw an error.

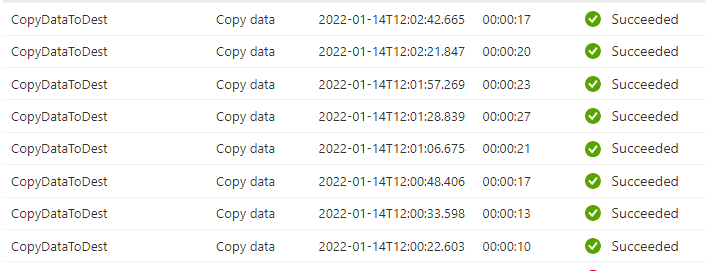

It's now time to test our pipeline. Validate the pipeline, run it, and check the Output tab for the results.

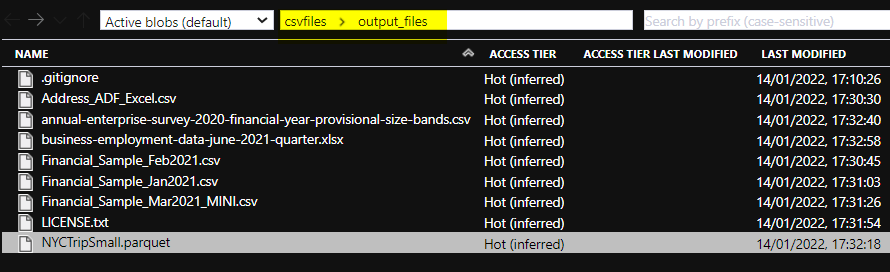

We can see that the pipeline ran successfully, copying the files one by one. Check the Azure blob storage folder, where all the files have been copied from source to destination.

Summary

'Activity 2' of this article, the 'File Copy', is just one example of a subsequent activity that builds on the output of the 'Get Metadata' activity. I chose file copy for this demo, but you can use any activity of your choice.
