Delete Information Primarily based On Excel Column Identify In Azure Information Manufacturing unit

Introduction

On this article, we’re going to talk about how one can choose and delete solely particular recordsdata from the ADLS listing based mostly on filenames current contained in the excel file. We’re going to see this with a real-time use case.

File deletion

Just lately I got here throughout a requirement for file deletion in ADLS. Azure Information Manufacturing unit’s delete exercise is sufficient to full this process, however the difficult half is the exercise ought to delete solely the objects which might be current within the excel ‘File Identify’ column. Allow us to see how this was achieved with a sensible demo.

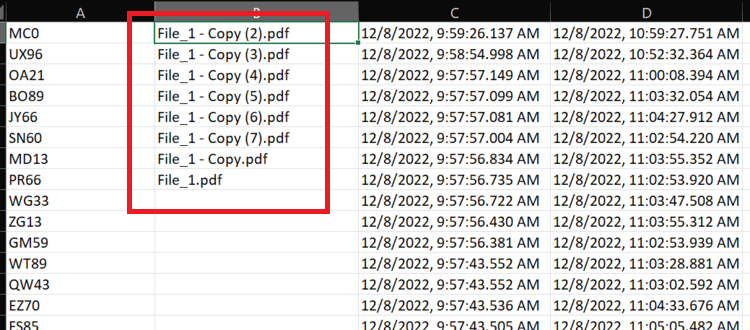

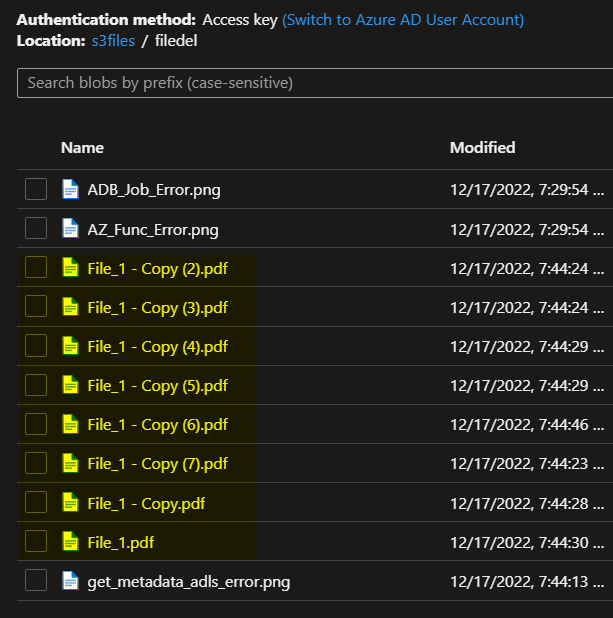

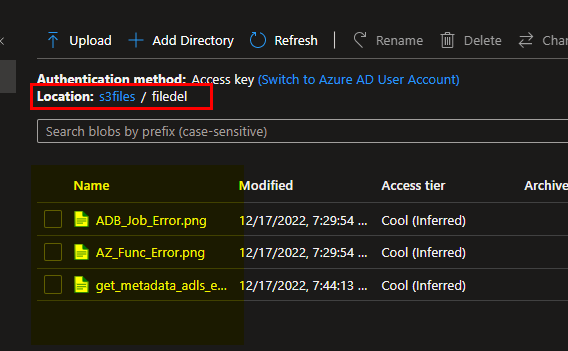

For this demo, I’m going to add few pattern recordsdata together with those which I’m making an attempt to delete right into a folder in my ADLS Gen2 listing. Aside from this I have to create an excel file that features the filenames to be deleted current in column B; like beneath. One frequent query that may arrive right here is how we will choose precisely the file title column if there are a number of columns current within the excel sheet. This may be achieved utilizing the vary choice throughout creation of the supply dataset which we are going to see within the subsequent part.

File names in column B

Steps

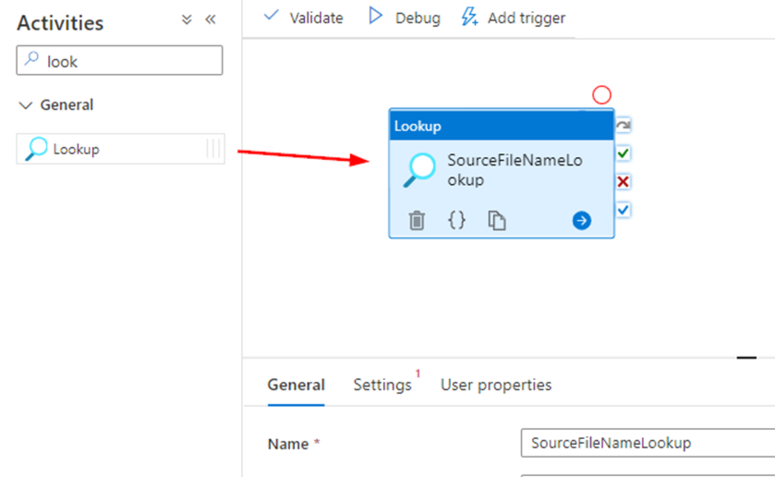

Click on and drag Lookup exercise into your ADF pipeline canvas which goes to learn out the file names that you simply wish to be deleted.

Subsequent go the settings tab and create the dataset which comprise the excel file with the filename column. Within the supply dataset choice click on on +New and set file kind as excel, then you’ll arrive on the following step.

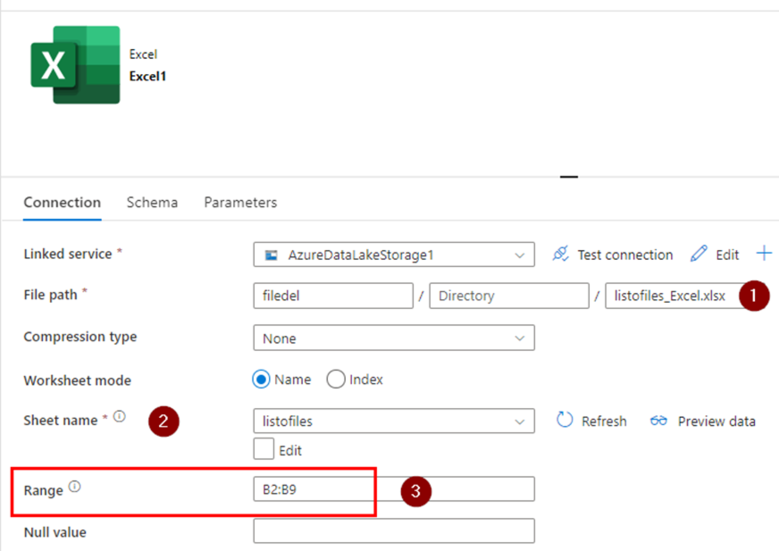

- Path of the excel file which is able to operate as supply with all of the filenames to be deleted.

- Choose the sheet title for those who excel sheet has a couple of.

- Vary is the one which you’ll be able to point out to select the cells beginning and ending together with the row and column title. In my case for those who may scroll up the excel sheet screenshot you may see the filenames are current from column B cell 2 by 10, therefore I’ve marked it as a spread. On this method it can learn solely the precise knowledge ignore all different which we don’t want.

In vary subject, I’ve restricted It to sure rows solely as I do know which column and up till which row the file names could be and that it’ll not be altering. In case you’re going to append or delete the file names into the excel in future you may go away the vary subject open as ‘B2’ as an alternative of ‘B2:B9’.

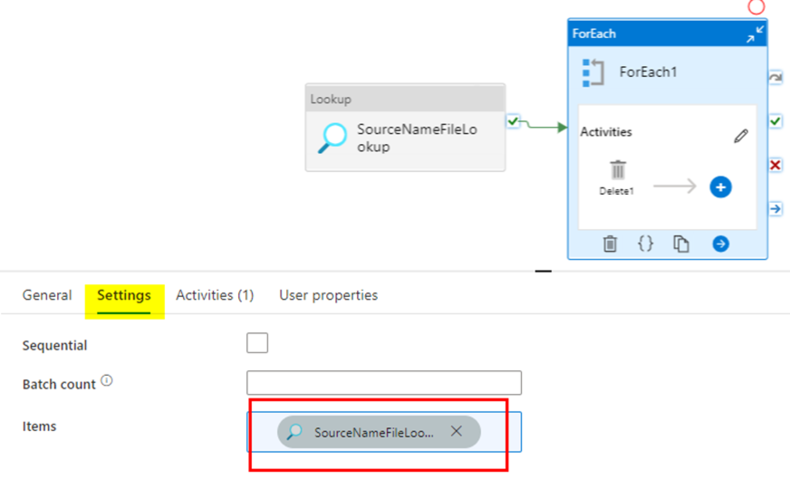

Now after the dataset is ready, we’re going to move the output of the lookup exercise into the foreach exercise. As soon as below the foreach ‘settingsàobjects’ tab enter the next expression for passing the output from lookup exercise.

@exercise('SourceNameFileLookup').output.worth

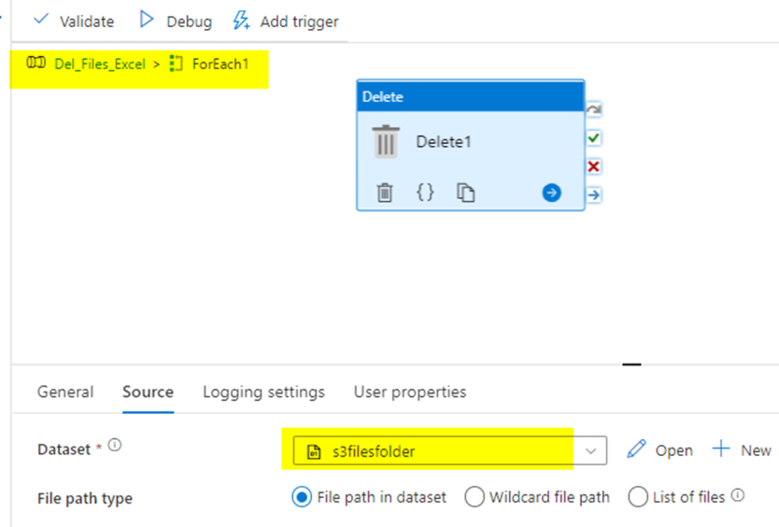

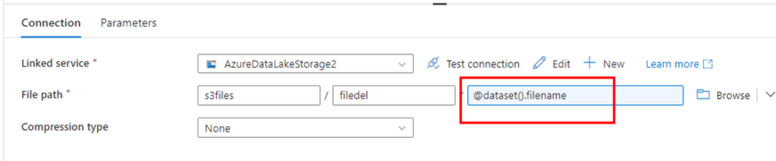

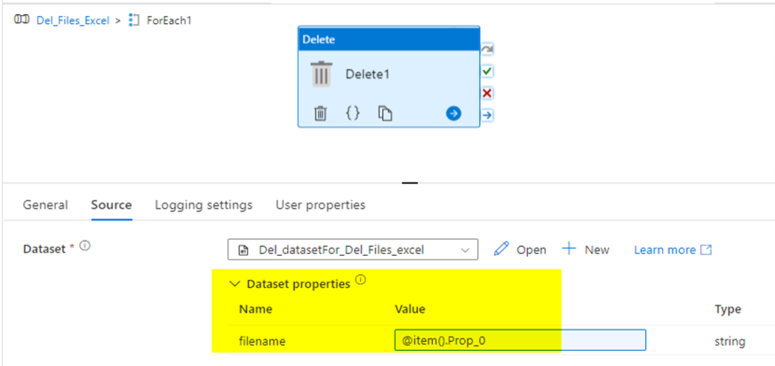

Contained in the foreach exercise, create a delete exercise and set the dataset pointing in the direction of the folder the place you wish to delete the recordsdata from like beneath.

To this point we have now setup pipeline however didn’t move the file title from the foreach to the delete exercise. With the present setup the delete exercise will delete your complete folder containing the recordsdata that we’re trying to find. Therefore we have now to ensure we have now handed the filename variable to the filename parameter within the delete exercise to ensure solely the recordsdata are deleted.

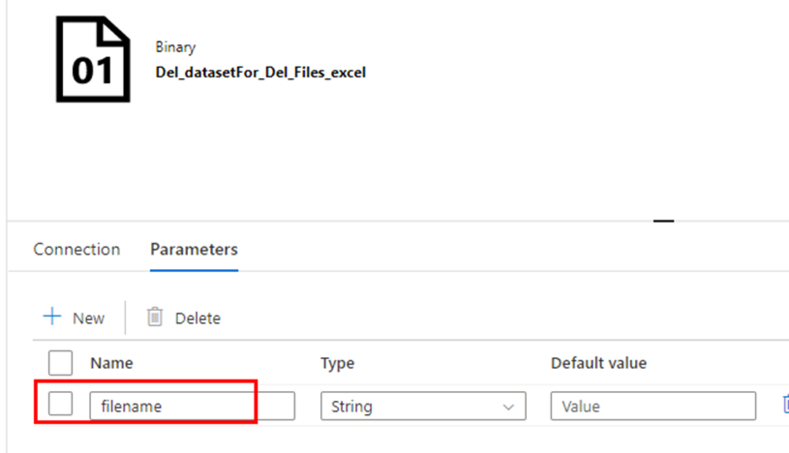

Within the dataset utilized by the delete exercise, create a string variable known as ‘filename’ and move it on to the filename subject of the dataset File path.

Within the supply settings of the delete exercise, I’m calling out the column title of the excel file that we’re wanting up initially. The column title is ‘Prop_0’ which I selected to depart it as a system generated one, in case your lookup file has headers you may point out within the dataset and name out right here.

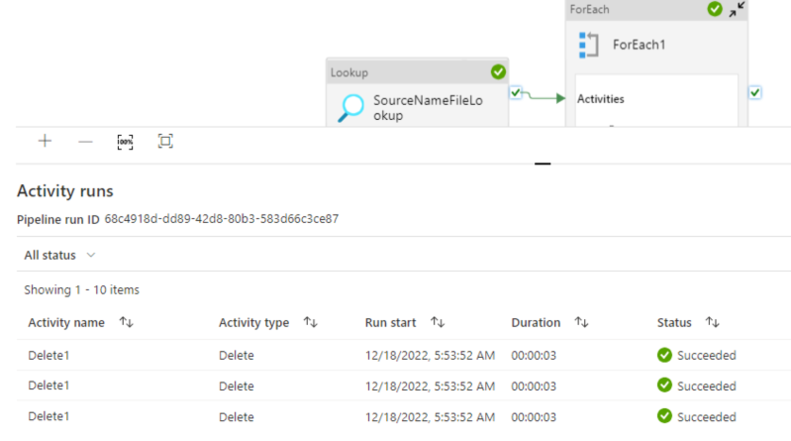

The pipeline is now prepared, lets run it to see if we’re getting the recordsdata deleted.

The pipeline has been triggered and accomplished efficiently.

We will see it has deleted all of the recordsdata in comparison with out enter within the excel column and beneath are the one recordsdata these are left after the exercise.

Abstract

A lot of the tasks has this requirement to clean-up particular set of stale recordsdata to cut back cloud value from the blob based mostly on title/kind referring them straight from a file relatively than enter them manually. The above steps would come helpful in such situations.

Hope this helps, let me know for any questions.