Creating an External Data Source in Azure Synapse Analytics

Today we are going to look at how to create an external data source to access data stored in other resources. If you remember, in one of our earlier articles we discussed the Logical Data Warehouse (LDW), which works much like a database that you can see in Azure Synapse Analytics. Although it may appear to be a physical database, it is only a virtual layer over data from various external sources, and it lets users query that data from Synapse. This is also called Data Virtualization. In SQL Server, this feature is called PolyBase, through which database users can query external sources or external databases.

Traditionally, data from one or more sources such as ADLS, APIs, IoT devices, and databases is collected by an ETL pipeline and then stored in a data warehouse, which is used to query the data. With Data Virtualization, you don't need to design pipelines that pull the data and store it locally; you can query the external resources directly with ordinary SQL queries. Best of all, users never feel that they are accessing an external data source: it feels just like accessing data stored locally.
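To make the "feels local" point concrete, here is a minimal sketch of an external table in a serverless SQL pool. It assumes the TestDataSource data source that is created later in this article already exists; the file format name ParquetFormat, the table dbo.SalesExternal, its columns, and the path mycontainer/sales/*.parquet are all hypothetical and must be replaced with your own.

```sql
-- Hypothetical file format describing Parquet files.
CREATE EXTERNAL FILE FORMAT ParquetFormat
WITH (FORMAT_TYPE = PARQUET);
GO

-- Hypothetical external table: the schema lives in the database,
-- but the rows stay in ADLS Gen2 and are read at query time.
CREATE EXTERNAL TABLE dbo.SalesExternal
(
    OrderId   INT,
    Amount    DECIMAL(10, 2),
    OrderDate DATE
)
WITH (
    LOCATION    = 'mycontainer/sales/*.parquet',  -- path relative to the data source LOCATION
    DATA_SOURCE = TestDataSource,
    FILE_FORMAT = ParquetFormat
);
GO

-- Queried exactly like a local table.
SELECT TOP 10 * FROM dbo.SalesExternal;
```

Nothing is copied into the database here; each query reads the files through the data source, which is what distinguishes this approach from loading the data with an ETL pipeline.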

Let's see how an External Data Source can be created.

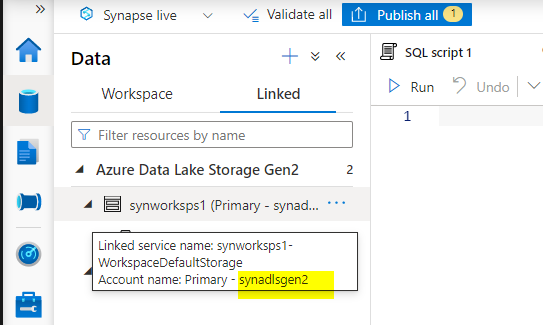

I'm using the Synapse workspace that I created for my last article. As you know, I have an ADLS Gen2 storage account that is created and linked to this workspace, and this is the account we are going to use to create the external data source. You can see the account name when you hover the mouse over it; we will use it to query the data in that account directly through SQL.

We can create the external data source with a SQL query. Go to the Develop tab on the left and create a new SQL script.

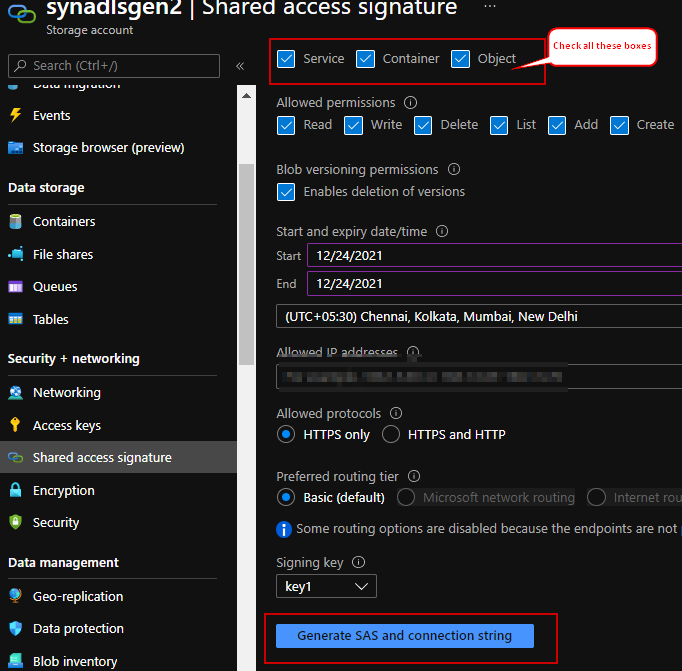

Please note that before creating the External Data Source you must create a Database Scoped Credential, which in turn requires a Master Key to be created as well. To get the SAS token that has to be entered as the SECRET, refer to the screenshot below and generate your SAS token and connection string. Keep in mind that these tokens come with an expiry time; if you plan to use the token later, set the start and expiry (From/To) times to suit your requirement.

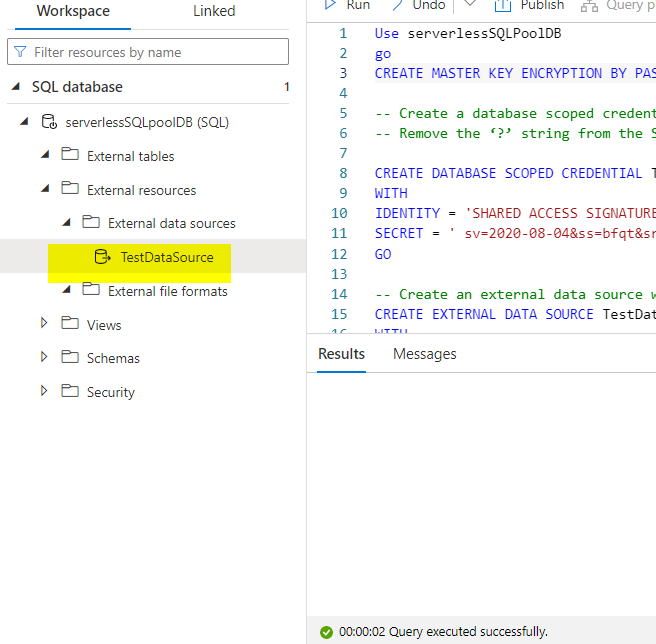
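Before running the script, it can help to check which prerequisites already exist. The sketch below queries the catalog views sys.symmetric_keys and sys.database_scoped_credentials, and shows ALTER DATABASE SCOPED CREDENTIAL for replacing an expired SAS token once TestCred (created in the script that follows) exists. The secret value is a placeholder, and it is assumed that you run this in the same serverlessSQLPoolDB database.

```sql
USE serverlessSQLPoolDB;
GO

-- Returns a row if the database master key already exists.
SELECT name, create_date
FROM sys.symmetric_keys
WHERE name = '##MS_DatabaseMasterKey##';

-- Lists existing database scoped credentials and their identities.
SELECT name, credential_identity, create_date
FROM sys.database_scoped_credentials;
GO

-- When the SAS token expires, replace the secret in place.
-- Placeholder value: paste a new token without the leading '?'.
ALTER DATABASE SCOPED CREDENTIAL TestCred
WITH IDENTITY = 'SHARED ACCESS SIGNATURE',
     SECRET = '<new-sas-token-without-leading-question-mark>';
GO
```

Altering the credential keeps any external data sources that reference it intact, so they do not need to be dropped and recreated when the token is rotated.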

The following script consists of the three mandatory steps needed to create the External Data Source. I ran it against the 'serverlessSQLPoolDB' database that we created in one of our earlier articles.

```sql
-- Create a database master key if one does not already exist, using your own password.
-- This key is used to encrypt the credential secret in the next step.
USE serverlessSQLPoolDB;
GO

-- Replace 'Password' with your own strong password.
IF NOT EXISTS (SELECT * FROM sys.symmetric_keys WHERE name = '##MS_DatabaseMasterKey##')
    CREATE MASTER KEY ENCRYPTION BY PASSWORD = 'Password';
GO

-- Create a database scoped credential with the SAS token as the secret.
-- Remove the leading '?' from the SAS token when entering it as the SECRET.
CREATE DATABASE SCOPED CREDENTIAL TestCred
WITH
    IDENTITY = 'SHARED ACCESS SIGNATURE',
    SECRET = 'xxxxxsv=2020-08-04&ss=bfqt&srt=rwdlacupx&se=2021-12-24T14:46:50Z&st=2021-12-24T06:46:50Z&spxxxxxr=https&sig=dO2eRMGxFb0BMOstcxIpDW8k3BTru1Psly70ch%2Bg8Do%3D';
GO

-- Create an external data source with the CREDENTIAL option.
CREATE EXTERNAL DATA SOURCE TestDataSource
WITH (
    LOCATION = 'https://synadlsgen.dfs.core.windows.net/',
    CREDENTIAL = TestCred
);
GO
```

Once the script runs successfully, refresh External Data Sources to see the newly created data source.
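You can also confirm the data source from the same script and then read files through it. A minimal sketch for a serverless SQL pool: the BULK path is resolved relative to the data source LOCATION, and mycontainer/sales/*.parquet is a hypothetical container and folder that you should replace with a real path in your account.

```sql
-- Confirm that TestDataSource exists and points at the expected account.
SELECT name, location
FROM sys.external_data_sources;

-- Query files directly through the data source (hypothetical path).
SELECT TOP 10 *
FROM OPENROWSET(
    BULK 'mycontainer/sales/*.parquet',
    DATA_SOURCE = 'TestDataSource',
    FORMAT = 'PARQUET'
) AS [result];
```

Because the credential is attached to the data source, the query itself contains no SAS token; rotating the token only requires updating TestCred.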

Summary

That is how you can create a data source to access data in external storage without moving it locally.
