Configuring an ADF Pipeline Activity Output to File in ADLS

Introduction

At the enterprise level, each project schedules and runs several ADF pipelines, but monitoring their outcomes in ADF Studio is currently a cumbersome process. After a pipeline activity has thrown an error, you have to inspect the activities one by one until you find the failed one, then click its output button to see the error. In this article, we will see a practical way to write the output of a pipeline activity to a file in Azure Blob Storage.

Configuring an ADF Pipeline Activity Output to File in ADLS

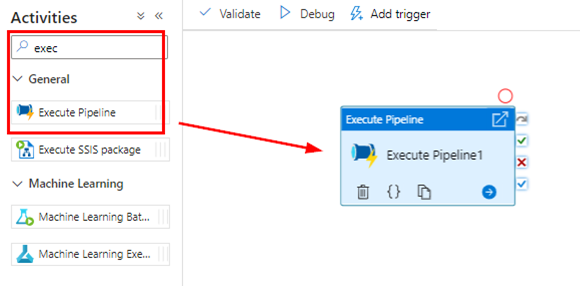

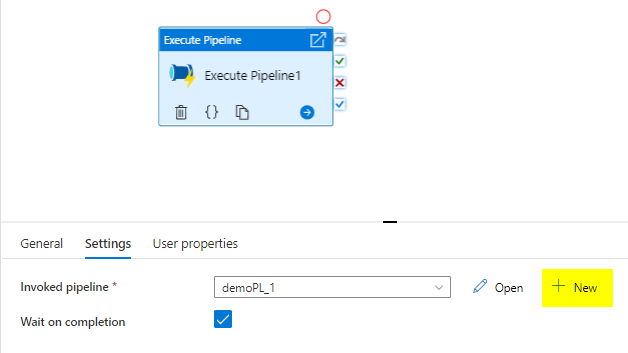

Open Azure Data Factory Studio from the Azure portal and create a demo pipeline. Once it is created, drag an 'Execute Pipeline' activity onto the canvas; this is the first activity we are going to configure.

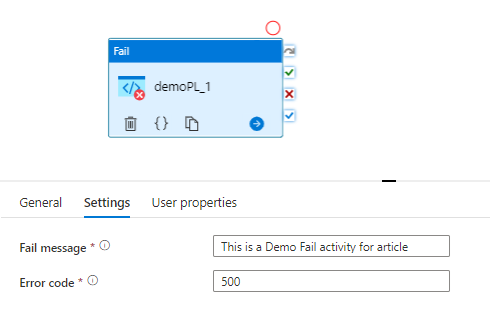

I've created a new pipeline containing a Fail activity just for this demo, which will be invoked from our first pipeline.

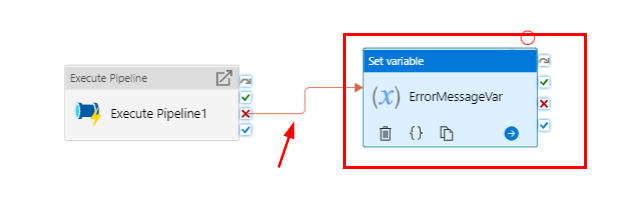

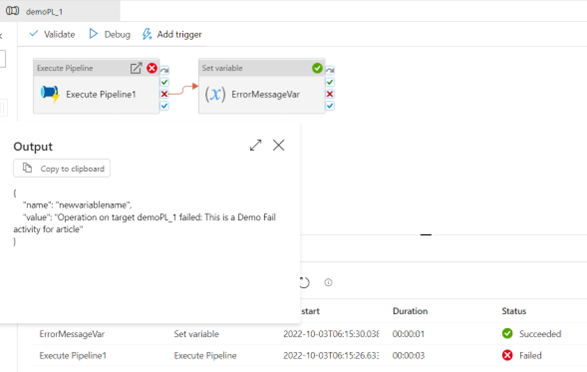
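For reference, a demo pipeline like this can be sketched in its JSON form. The snippet below builds that JSON with Python purely for illustration, following the Data Factory pipeline JSON layout as I understand it. The pipeline name `FailingDemoPipeline`, the activity name `Fail1`, and the message and error code values are assumptions; the article does not state them.

```python
import json

# Hypothetical names and values; they are not taken from the screenshots.
failing_pipeline = {
    "name": "FailingDemoPipeline",
    "properties": {
        "activities": [
            {
                "name": "Fail1",
                "type": "Fail",
                "typeProperties": {
                    # Message and error code reported when the activity fails.
                    "message": "Demo failure raised on purpose",
                    "errorCode": "500",
                },
            }
        ]
    },
}

print(json.dumps(failing_pipeline, indent=2))
```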

Next, I want to capture the error message thrown by the 'Fail' activity in a variable, so I'll drag a 'Set variable' activity onto the canvas. Then connect the two activities, but use the "On failed" path from Execute Pipeline1 so that the 'Set variable' activity runs only when the pipeline call fails.

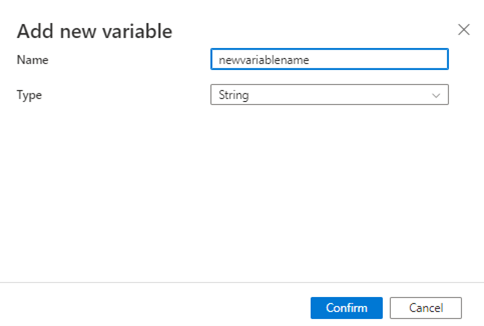

For the Set variable activity, add a new variable name and set its type to String.

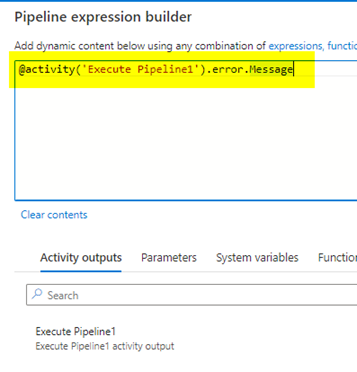

The Value field is where we capture the error returned by the pipeline. Initially, I ended the dynamic content below with output.error.Message, which caused an error. Here is the exact expression, which you can use directly to save the time of debugging and correcting it.

@activity('Execute Pipeline1').error.Message

Now when I run the pipeline, it captures the error message in the variable that we created.
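As a rough sketch, the Set variable step and its failure dependency could look like this in the pipeline's JSON, again built with Python for illustration. The activity name `Set variable1` and the variable name `errorMessage` are assumptions; only `Execute Pipeline1` and the expression come from the text above.

```python
import json

# Pipeline-level variable declaration (assumed name "errorMessage").
pipeline_variables = {"errorMessage": {"type": "String"}}

# Set variable activity that runs only when "Execute Pipeline1" fails.
set_variable_activity = {
    "name": "Set variable1",  # assumed activity name
    "type": "SetVariable",
    "dependsOn": [
        {
            "activity": "Execute Pipeline1",
            # "Failed" corresponds to the failure path drawn on the canvas.
            "dependencyConditions": ["Failed"],
        }
    ],
    "typeProperties": {
        "variableName": "errorMessage",
        "value": {
            "value": "@activity('Execute Pipeline1').error.Message",
            "type": "Expression",
        },
    },
}

print(json.dumps(set_variable_activity, indent=2))
```

Note that the expression reads `.error` directly on the activity rather than going through `.output`, which matches the correction described above.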

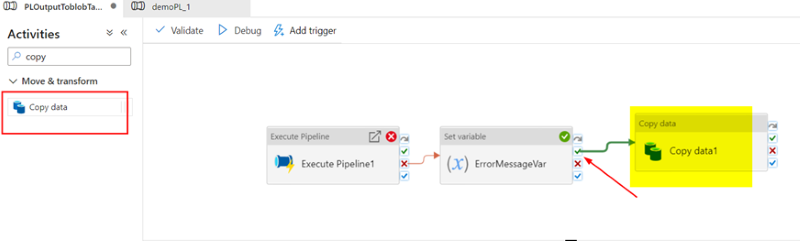

With that in place, let's write the error message to a text file in ADLS, which is the goal of this article. For this, I'm going to add one more activity, the Copy activity, at the end of our pipeline.

This copies the variable populated by the Set variable activity, which runs just before it, into a text file in ADLS storage. For the source, I've pointed to a dummy CSV file containing only column headers, so that the variable can be passed along as an extra column and then written to the text file on its own. The next steps explain how this is done.

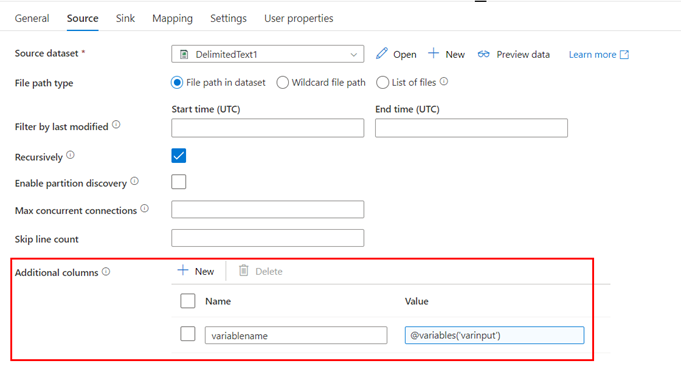

In the Source tab, add an additional column (this is what will be written to the destination text file) and set its value to the variable that we created in the earlier step.

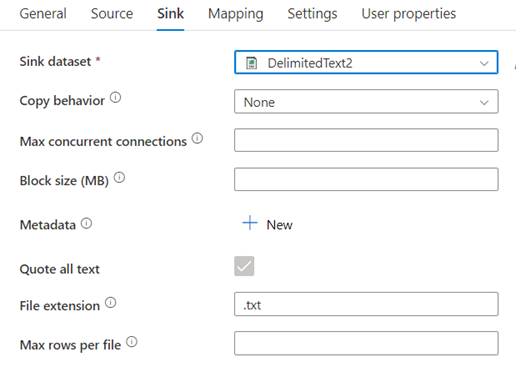
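In JSON terms, the additional column on the Copy activity source could be sketched as follows. This is a minimal illustration built with Python; the column name `ErrorMessage` and the variable name `errorMessage` are assumptions, and the source type assumes a delimited text (CSV) dataset as described above.

```python
import json

# Copy activity source with one extra column fed from a pipeline variable.
copy_source = {
    "type": "DelimitedTextSource",  # the dummy CSV file with headers only
    "additionalColumns": [
        {
            "name": "ErrorMessage",  # assumed column name
            "value": {
                # Reads the variable set by the preceding activity.
                "value": "@variables('errorMessage')",
                "type": "Expression",
            },
        }
    ],
}

print(json.dumps(copy_source, indent=2))
```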

The sink is the location where you want the output to be saved.

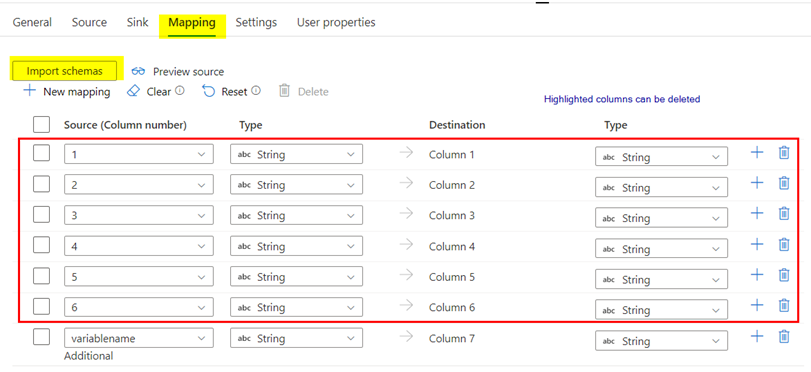

Now, in the Mapping tab, click Import schemas to import all the columns. You will see the columns from the source file along with the additional column that you set in the Source tab. Delete all the columns that came from the source file and keep only the variable column, which is the one that writes the output to the text file.

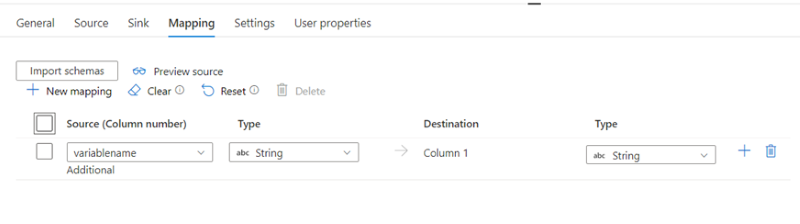

Once you have deleted them, only the variable column remains in the mapping.

The pipeline is now ready to run, so let's go ahead and trigger it.
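The effect of that deletion on the Copy activity's mapping can be sketched like this. The helper `keep_only_column` and the column names `Id`, `Name`, and `ErrorMessage` are hypothetical; the real names depend on your dummy CSV headers and on the additional column you defined.

```python
import json

def keep_only_column(mappings, column_name):
    """Return only the mappings whose source column matches column_name."""
    return [m for m in mappings if m["source"]["name"] == column_name]

# What "Import schemas" might produce: CSV headers plus the extra column.
imported_mappings = [
    {"source": {"name": "Id"}, "sink": {"name": "Id"}},
    {"source": {"name": "Name"}, "sink": {"name": "Name"}},
    {"source": {"name": "ErrorMessage"}, "sink": {"name": "ErrorMessage"}},
]

# Mapping after deleting every column except the variable column.
translator = {
    "type": "TabularTranslator",
    "mappings": keep_only_column(imported_mappings, "ErrorMessage"),
}

print(json.dumps(translator, indent=2))
```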

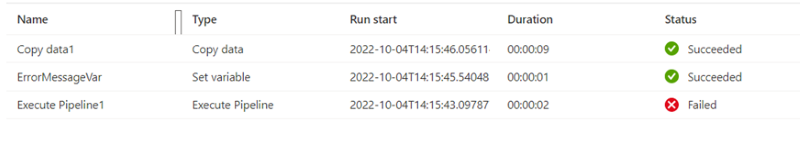

We can see that the first step shows as failed, which is the expected behaviour since we set up a Fail activity inside it. Let's check the destination ADLS storage for the file that has been created and its contents.

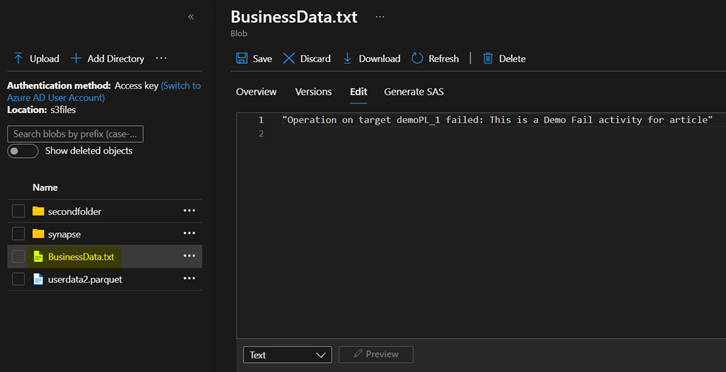

The output has successfully been written to a text file, BusinessData.txt, in my destination folder with the correct error message.

Summary

Today we learned how an ADF pipeline error message can be written to a text file in a storage location, so that we can save the time spent clicking through each activity to view its output.